More Than Meets the Mind’s Eye: Physics Students Develop New Techniques to Turn Thoughts Into Pictures

What if you could see what someone else is seeing, without having to take a photo or record their recollection? What if we discovered that our imagination precedes our ability to perceive reality, and not the other way around? What if we could reconstruct entire visual sequences using thought and memory alone?

Two senior students in the Department of Physics are exploring a question that sits at the intersection of neuroscience, artificial intelligence and human imagination: can brain activity be decoded into visual reality? Their senior design project, “Visual Reconstruction with EEG,” is an early but ambitious step toward that future.

On May 8, Isaac Van Benthuysen and Jack Caputo will present their work at the Innovation Expo, where students showcase projects that push the boundaries of science and technology.

From dreams to data

For physics major Van Benthuysen, the idea for the project arrived where inspiration often does: in his sleep.

“I have very vivid dreams,” he says. “And they really interest me.”

That curiosity led him to research showing that the brain uses the same regions to generate dream imagery as it does to process real-world visual input.

“The brain basically uses the same hardware to create dream imagery as it does to represent what you see in your day-to-day life,” Van Benthuysen explains.

From there, the leap felt almost inevitable: if the brain encodes real-world vision in a measurable way, could that data be decoded, and eventually visualized?

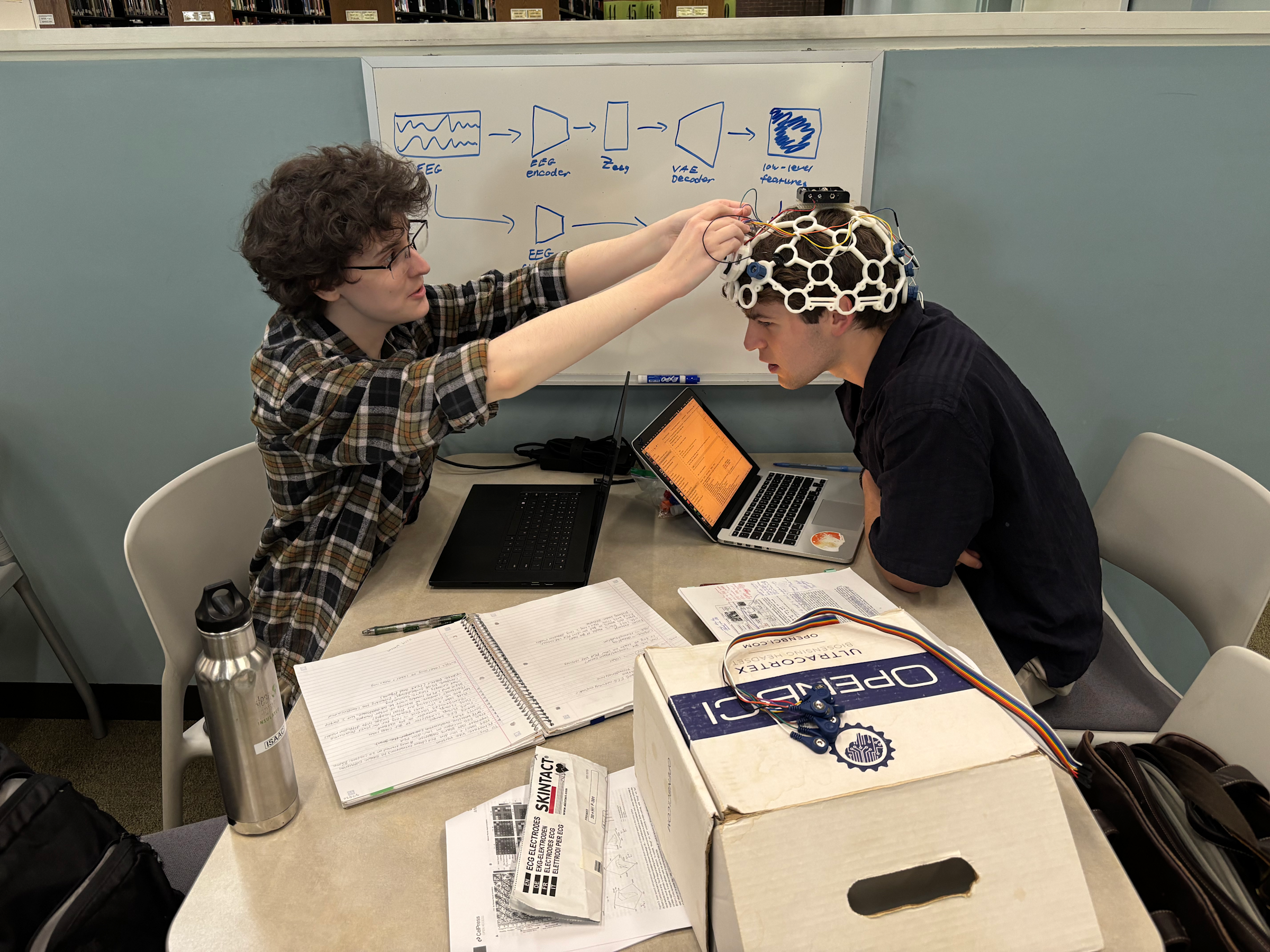

Using common technology for uncommon innovation

Working alongside fellow physics student Caputo, Van Benthuysen set out to explore that question using electroencephalography (EEG): a noninvasive, accessible and inexpensive method of recording electrical activity in the brain. At a high level, their method works by aligning that electrical data with imagery so that one can be translated into the other.

Their approach relies on training machine learning models on publicly available datasets in which participants wore EEG devices while viewing images. “We’re recording their brain’s responses to various images,” Van Benthuysen says. “Our goal is to take that brainwave data and then translate that into an image that is as faithful as possible to the original image that the people were looking at.”

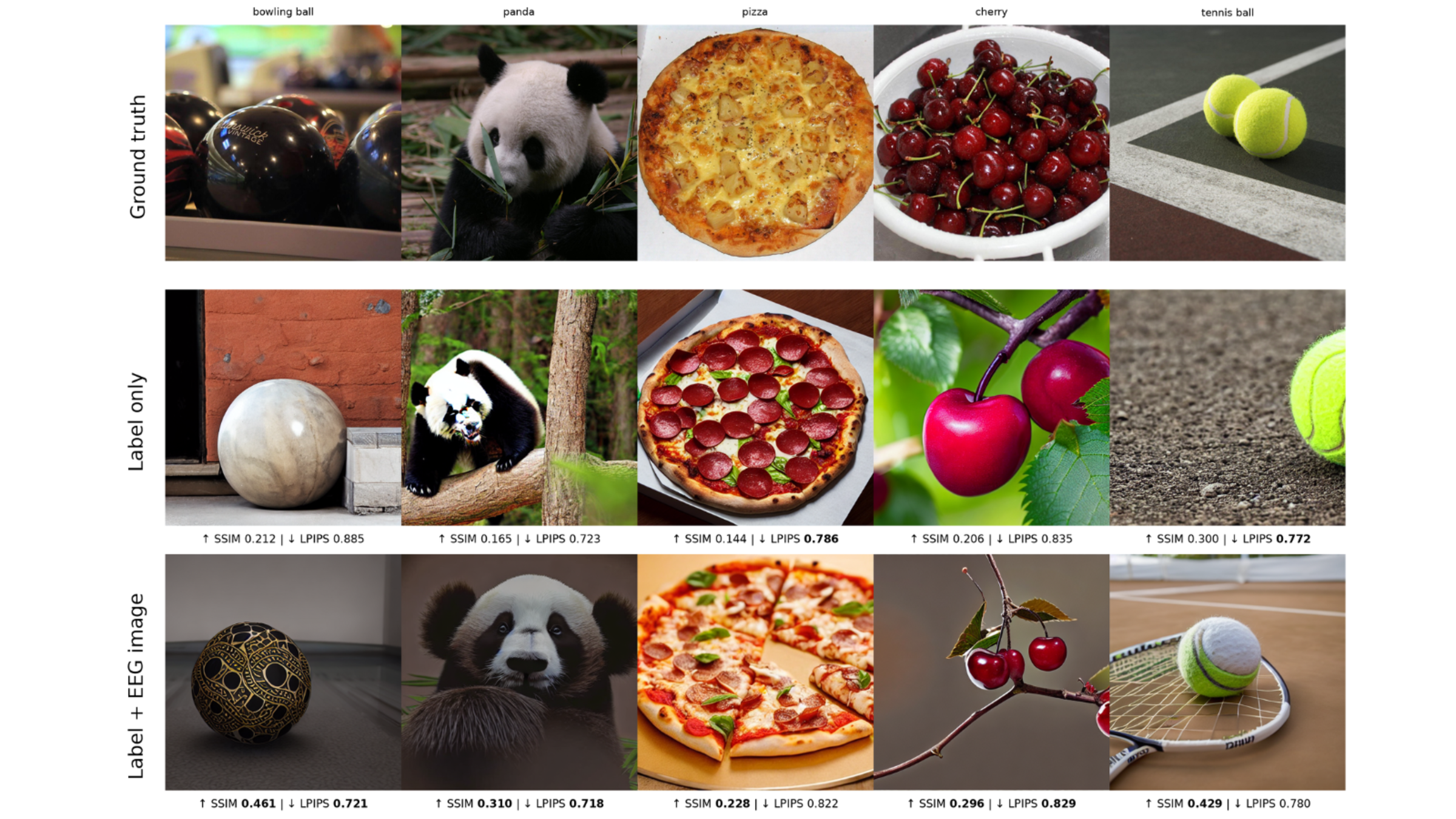

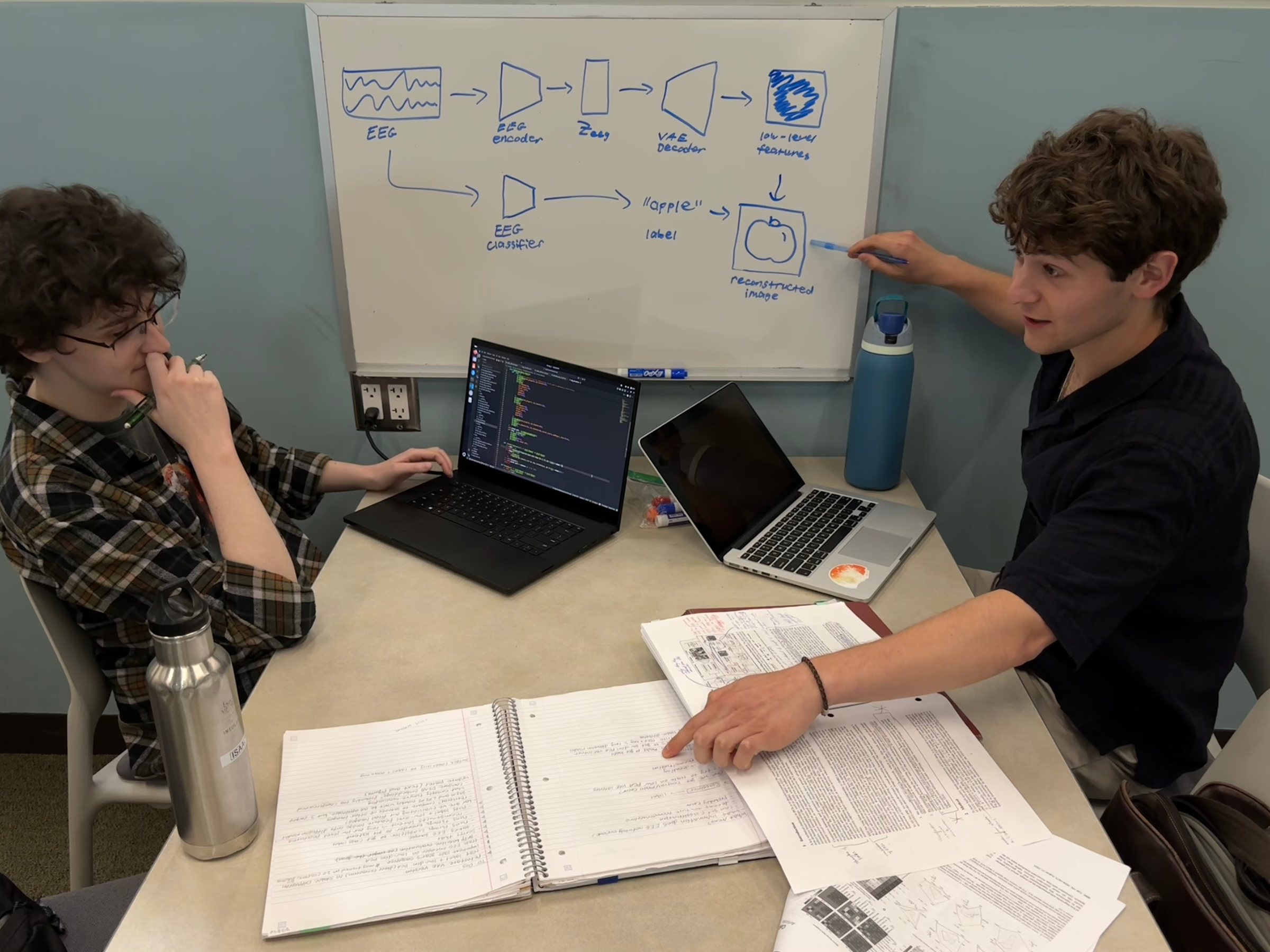

Existing AI models can already convert images into compressed numerical representations (known as “latents”) and then reconstruct them back into images. Van Benthuysen and Caputo’s innovation lies in training a model to translate EEG signals into that same numerical format, so that the decoder from a pre-existing image generation model can turn them into pictures.

“If we get these two representations to be the same,” Van Benthuysen explains, “we can use the decoder to turn the EEG data back into an image.”
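For readers curious about what “getting the two representations to be the same” can look like, here is a minimal sketch. It assumes a simple linear mapping from EEG features to latents, trained by stochastic gradient descent on synthetic data; the students’ actual model, datasets and decoder are not described in this article. The helper names (`fit_eeg_to_latent`, `matvec`, `mse`), the dimensions and the hidden “true” mapping are all hypothetical stand-ins.

```python
import random

def matvec(W, x):
    # Multiply a matrix (a list of rows) by a vector.
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def mse(W, pairs):
    # Mean squared error between predicted and target latents over all pairs.
    total, count = 0.0, 0
    for eeg, latent in pairs:
        pred = matvec(W, eeg)
        total += sum((p - t) ** 2 for p, t in zip(pred, latent))
        count += len(latent)
    return total / count

def fit_eeg_to_latent(pairs, eeg_dim, latent_dim, lr=0.05, epochs=200):
    # Hypothetical linear "EEG encoder": learn W so that W @ eeg approximates
    # the image latent, using per-sample gradient steps on the squared error.
    W = [[0.0] * eeg_dim for _ in range(latent_dim)]
    for _ in range(epochs):
        for eeg, latent in pairs:
            pred = matvec(W, eeg)
            for i in range(latent_dim):
                err = pred[i] - latent[i]
                for j in range(eeg_dim):
                    W[i][j] -= lr * err * eeg[j]
    return W

random.seed(0)
EEG_DIM, LATENT_DIM = 8, 4
# Synthetic stand-in for a dataset: a hidden mapping produces "image latents"
# from "EEG features". Real latents would come from an image model's encoder.
true_W = [[random.uniform(-1, 1) for _ in range(EEG_DIM)] for _ in range(LATENT_DIM)]
pairs = []
for _ in range(50):
    eeg = [random.uniform(-1, 1) for _ in range(EEG_DIM)]
    pairs.append((eeg, matvec(true_W, eeg)))

W0 = [[0.0] * EEG_DIM for _ in range(LATENT_DIM)]
W = fit_eeg_to_latent(pairs, EEG_DIM, LATENT_DIM)
print(f"error before: {mse(W0, pairs):.4f}, after: {mse(W, pairs):.6f}")
# In a real pipeline, matvec(W, new_eeg) would be handed to a pretrained
# image decoder (not shown here) to produce the reconstructed picture.
```

Real EEG is far noisier than this toy data, which is one reason actual reconstructions come out blurry.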

In practice, that means teaching the model to recognize patterns in brain activity that correspond to visual features, such as color, shape and texture, and to reconstruct them into something recognizable.

Creating complexity from a simpler signal

But there’s a fundamental limitation: EEG data is incomplete.

“EEG data is very sparse,” says. “The electrodes are far apart and it can’t really get detailed information about what’s happening in the brain.”

While the human brain contains roughly 86 billion neurons, EEG captures only a small fraction of their activity, which limits the level of detail the system can reconstruct. As a result, current outputs are far from photorealistic. “Right now it will still be a pretty blurry…kind of blobs of the general image,” he says.

To improve results, the team integrates additional machine learning methods, including classification models and generative AI systems. First, a classification model uses the EEG data to predict what category the viewed image belongs to, such as whether the subject is an “apple” or a “person.” Then the team combines that label with visual features extracted from the EEG data. That’s where techniques like diffusion models come in: diffusion is the mechanism behind most of the AI-generated images we see day to day.
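The two-stage idea (first a category label, then the label combined with EEG-derived features) can be sketched as follows. This assumes a nearest-centroid classifier as a stand-in for the team’s classification model and a plain dictionary as a stand-in for whatever conditioning a diffusion model would receive. The feature values, labels and the `fit_centroids` and `build_conditioning` helpers are hypothetical, and no real diffusion model is called.

```python
import math

def fit_centroids(trials):
    # Average the feature vectors of all training trials that share a label.
    sums, counts = {}, {}
    for features, label in trials:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}

def nearest_centroid(features, centroids):
    # Stage 1: predict the category whose average feature vector is closest
    # (Euclidean distance) to this trial's EEG features.
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))

def build_conditioning(features, centroids):
    # Stage 2 (hypothetical): pair the coarse "what" (the label) with the
    # EEG-derived "how it looks" (the features). A real pipeline would pass
    # something like this to a diffusion model as its conditioning input.
    label = nearest_centroid(features, centroids)
    article = "an" if label[0] in "aeiou" else "a"
    return {"prompt": f"a photo of {article} {label}", "visual_features": list(features)}

# Made-up training trials: (EEG feature vector, category seen by the viewer).
training = [
    ([1.0, 0.0, 0.2], "apple"),
    ([0.8, 0.2, 0.2], "apple"),
    ([0.0, 0.9, 0.6], "person"),
    ([0.2, 0.7, 0.8], "person"),
]
centroids = fit_centroids(training)
cond = build_conditioning([0.8, 0.2, 0.1], centroids)
print(cond["prompt"])  # a photo of an apple
```

Splitting the work this way lets a coarse but reliable signal (the category) guide the generator, while the blurrier visual features shape details such as color and layout.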

We only see what we can measure

One of the most important aspects of the project is its reliance on EEG rather than more precise but less practical technologies like functional MRI (fMRI). Van Benthuysen and Caputo expect the quality and specificity of the images to improve substantially as measurement tools become more refined, fMRI becomes more accessible and data from different types of brain imaging can be combined.

“fMRI has really good spatial resolution,” Van Benthuysen says. “But it’s expensive, not portable…whereas EEG is basically like a fancy hat.”

That accessibility makes EEG especially promising for real-world applications. Researchers are already exploring ways to combine EEG with other technologies to overcome its limitations.

“If you can match EEG data to fMRI data,” Caputo explains, “then your EEG could, in principle, be used for much, much better reconstruction.”

A vision for something much bigger

While the team’s current work focuses on reconstructing static images, the implications extend much further. With improvements in data quality and modeling, similar systems could one day reconstruct everything from memories to psychedelic journeys to what the mind sees in a dream, a coma, or even at the moment of death.

“If you have enough data,” Van Benthuysen says, “the model could theoretically reconstruct anything visualized, regardless of whether or not it was something it was trained on.”

That possibility raises profound philosophical questions. Where does perception end and imagination begin?

For now, their system produces imperfect images, but Van Benthuysen and Caputo are already seeing improvements as they refine their methods. The project is still in its early stages, but it captures something essential about the spirit of the Innovation Expo: curiosity, ambition and the willingness to explore ideas that feel just out of reach.

After all, we can only experience what we can envision. To go beyond our present limits, it just takes a little imagination.