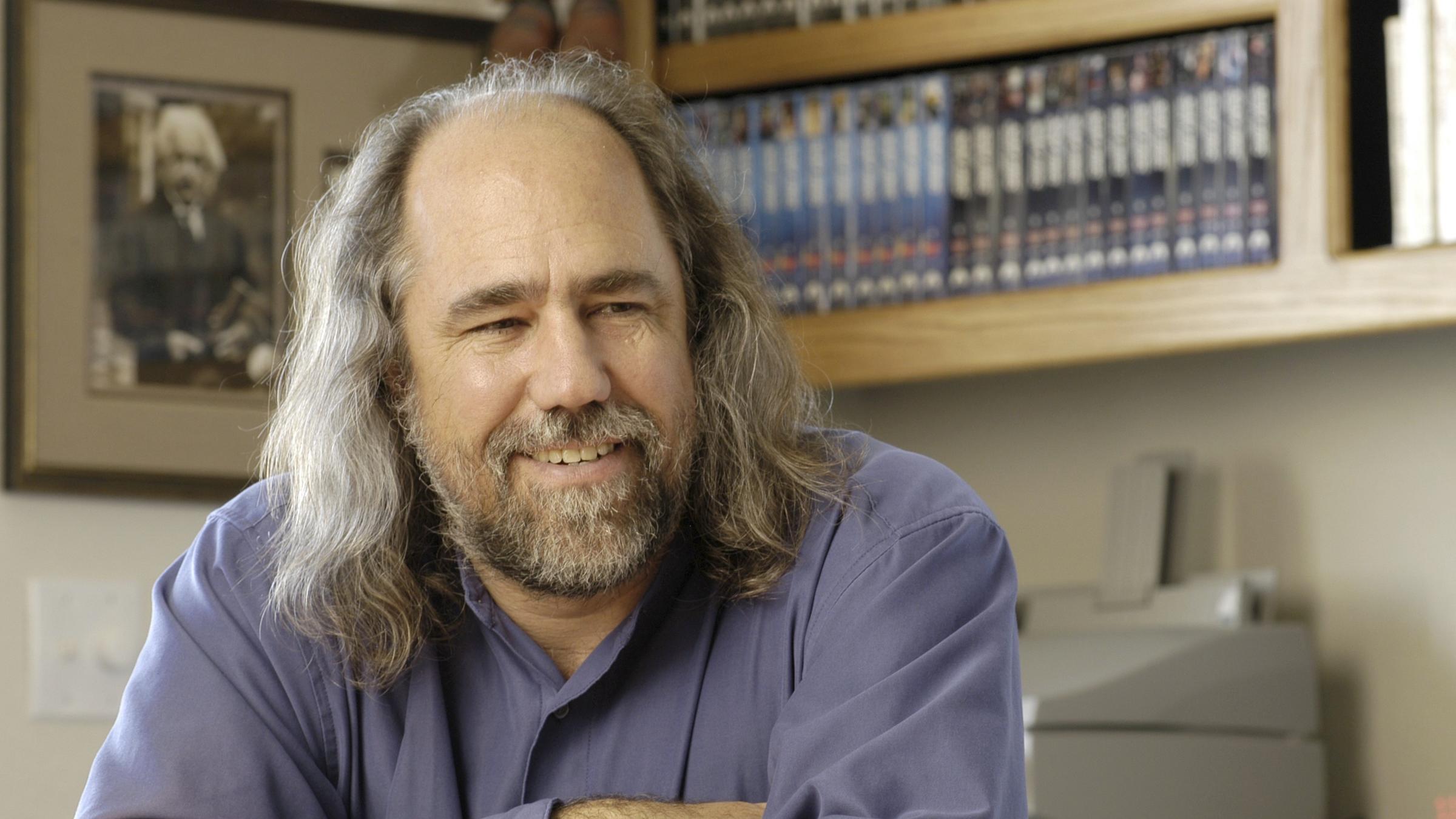

IBM Watson's Chief Scientist Shares Glimpse of the Future of Artificial Intelligence

Using a systems engineering approach, Grady Booch works at the intersection of computing and what it means to be human

We have all seen the commercials where a consumer product with a certain name plays a song or helps with a homework question upon voice command. They have grown in popularity among consumers because they offer users lots of convenience. But these products are just the beginning of artificial intelligence (AI).

In a webinar titled "What is the Self?" Grady Booch of the IBM Almaden Research Laboratory offers a glimpse of where AI is headed. The webinar, part of the SERC Talks series, is sponsored by the Systems Engineering Research Center (SERC), a University-Affiliated Research Center (UARC) of the U.S. Department of Defense operated through the School of Systems and Enterprises (SSE) at Stevens Institute of Technology.

As a user of several widely available request/response, or cognitive assistance, AI technologies, Booch appreciates the value they bring. “Those are powerful kinds of things, but they're not enough,” he says.

“Embodiment is a requirement for true intelligence,” says Booch. He explains that embodied cognition AIs are physically in the world, have a sense of the world and can manipulate it. “They are systems that can reason and learn beyond just the response/reacting, and, this one is little bit controversial, we intend for them to have identity.”

Taking a higher form

Imagine you are in a high-rise building looking for the elevator to get to an important meeting on the 21st floor. You ask the person closest to you where the elevator is located. They don't say anything, but simply nod in the direction where you can find the elevator. This form of communication without using words is subtle, and precisely what is being sought with future forms of artificial intelligence (AI), asserts Booch.

Embodied cognition, the kind of AI needed for the subtle communication described above, is a higher form of AI. It goes beyond playing a song, finding an answer on a search engine, or even ordering pizza tailored to your personal tastes.

“Imagine taking Watson and literally putting it in the physical world,” says Booch, who is also a chief scientist for Watson/M at IBM Research. “Give it eyes, ears, and touch, then let it act in that world with hands and feet and a face, not just as an action of force but also as an action of influence.”

Taking the ability to reason and learn and putting it in a number of form factors, such as a robot or avatar, draws AI closer to the ways humans work and live.

Approaching AI challenges as a systems engineer

If the title of the webinar, "What is the Self?," seems a little existential, it is because scientists like Booch are working at the intersection of computing and what it means to be human.

The architectures of cognitive assistance AIs like Alexa and Siri are based on a programming model, while embodied cognition AIs offer more than request/response behavior and conversation. They are not programmed; rather, they learn and have the ability to reason.

“As we start building systems that have emotional affect, that have the ability to recognize your emotions and indeed have their own personality, systems that have not just reaction, one of the implications is that now we are dealing with systems that look creepily like us,” he adds.

So how can computers be developed to be better learning systems?

And what happens when computers get to be better learners and need to interact with people?

In pursuit of answers, Booch approaches AI challenges as a systems engineer. Having spent much of his career advancing the fields of software engineering and software architecture, including co-authoring the Unified Modeling Language (UML), Booch is in a unique position to appreciate the value of systems engineering programs at SSE for future AI problem solvers.

As a systems engineer, he considers the many aspects of an AI system, as opposed to treating it purely as an AI problem that needs to be engineered properly.

Building software-intensive systems that are taught and then learn has deep implications for the software development lifecycle, especially when considering systems of systems, asserts Booch.

“Trying to understand, debug and modify a system that learns is a pretty gnarly problem,” he says.

The Systems Engineering Research Center (SERC) is managed by Stevens Institute of Technology. A U.S. Department of Defense University-Affiliated Research Center (UARC), the SERC consists of a network of 22 prominent research institutions and over 200 researchers from across the nation focused on enhancing the nation’s knowledge and capability in the area of systems engineering thinking to address critical global issues.

You can view the entire webinar on the Systems Engineering Research Center YouTube channel. For more information on SERC Talks, visit the SERC website.