Biomedical Engineering Professor Receives $622,287 NSF CAREER Award to Personalize Virtual Reality Interfaces for Motor Skills Therapy

Raviraj Nataraj’s research leverages a patient’s physical and mental states to improve training speed, effectiveness and outcomes

Realistically, how many times do you think you could repeat the same action — such as moving a pen from one spot on your desk to another — with little variation?

What if your natural motions were limited? Or if simply holding up your arm required additional physical and mental effort?

Physically, mentally and emotionally, how many times do you think you could do it? Fifty times? Maybe 100?

For patients with brain or spinal cord injuries, physical therapy to recover the voluntary movement, or motor, function needed for everyday activities can involve repeating such motions anywhere from hundreds to tens of thousands of times over a period of weeks, months or even years. Although such therapy is ultimately designed to improve a patient’s independence and quality of life, the stress, strain and time commitment it requires can be grueling.

So how do you motivate a person to show up and do yet another set of exercises when the process is so fatiguing to the body, mind and spirit?

In recent years, gamification and virtual reality (VR) have become more prevalent in motor therapy. The fun and incentives of gaming, combined with the immersive experience of virtual reality, encourage patients to stay with their therapy regimen for longer periods and complete more repetitions.

But highly realistic graphics and high-score incentives cannot solve the problem alone. In fact, VR motor therapy does not always achieve better outcomes than traditional methods, nor does it guarantee that patients see results any faster.

The missing piece, says Raviraj Nataraj, assistant professor of biomedical engineering and principal investigator of the Movement Control Rehabilitation Laboratory, is personalization. Or, more specifically, personalization of a VR therapy platform based not only on how well a patient is performing, but also on how well the person is feeling.

“It's great that you can encourage and incentivize people to do more repetitions. But I see VR platforms as being so customizable, there must be a way in which we can also get better gains in function for the same number of repetitions,” he said.

Nataraj was recently awarded a $622,287 National Science Foundation CAREER Award to attempt just that.

Titled “Personalizing Sensory-Driven Computerized Interfaces to Optimize Motor Rehabilitation,” the five-year project seeks to develop improved methods of VR motor therapy that are more personalized to, and therefore more effective for, the individual by leveraging both their physical and mental states.

Working on the Stevens campus with spinal cord injury patients from the James J. Peters Department of Veterans Affairs Medical Center in the Bronx, New York, Nataraj will explore how patient performance is affected when two elements of training are adapted in real time: the difficulty level and the augmented sensory feedback that provides visual and haptic (touch-based) guidance cues. These adaptations will be driven by objective measures, such as the user’s physical readiness, and by subjective measures of personal well-being.

“This feeling-better component is critical to ensure that they're willing to do more repetitions and repeat sessions,” Nataraj said. “That’s the second level that people usually ignore in customizing these systems. And that's what's giving our system that extra layer of personalization that I think should improve clinical retention.”

As an educational component, the project will include training high school students to develop VR rehabilitation applications customized to the needs of specific individuals with spinal cord injury.

Perfecting personalization

Nataraj’s VR training platform centers on two levels of personalization.

The first is based on what is referred to as a disability archetype; namely, which of three combinations of physical and cognitive impairment the user fits into: neurotypical, physical injury with no cognitive impairment or physical injury with cognitive impairment. The user’s initial settings in the VR platform are based on this archetype.

The second level of personalization builds on the first: the VR system adapts to the user as they continue to use it. The adaptation parameters Nataraj is focusing on are the difficulty, or complexity, of the training task and the nature of the guidance feedback provided during training, specifically whether it is more visual or more haptic.

“We're trying to see whether or not there are repeatable patterns in physiological and perceptional responses based on the person's disability archetype and if they respond uniquely to people who have a different archetype,” he said. “But once we collect enough of that information, there should be a database from which we can pull and come up with an adaptive system such that we can really fine-tune these parameters for each individual user.”
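For readers curious how the two levels of personalization might fit together in software, the Python sketch below shows one possible structure. It is not Nataraj’s implementation. The `Archetype` and `Settings` names, the 1-to-10 difficulty scale, the `haptic_weight` mix of visual versus haptic cues and every starting value are hypothetical placeholders, because the article does not publish any of them.

```python
from dataclasses import dataclass
from enum import Enum

class Archetype(Enum):
    NEUROTYPICAL = 1
    PHYSICAL_ONLY = 2           # physical injury, no cognitive impairment
    PHYSICAL_AND_COGNITIVE = 3  # physical injury with cognitive impairment

@dataclass(frozen=True)
class Settings:
    difficulty: int       # hypothetical scale: 1 (easiest) to 10 (hardest)
    haptic_weight: float  # hypothetical mix: 0.0 = all visual cues, 1.0 = all haptic cues

# Level 1: hypothetical starting points per archetype (no real values are published).
INITIAL_SETTINGS = {
    Archetype.NEUROTYPICAL: Settings(difficulty=6, haptic_weight=0.5),
    Archetype.PHYSICAL_ONLY: Settings(difficulty=4, haptic_weight=0.5),
    Archetype.PHYSICAL_AND_COGNITIVE: Settings(difficulty=2, haptic_weight=0.75),
}

def adapt(current: Settings, difficulty_step: int, haptic_shift: float) -> Settings:
    """Level 2: return adjusted settings after a session, clamped to valid ranges."""
    difficulty = min(10, max(1, current.difficulty + difficulty_step))
    haptic_weight = min(1.0, max(0.0, current.haptic_weight + haptic_shift))
    return Settings(difficulty, haptic_weight)

# Example: start from the archetype, then make the task harder and lean toward visual cues.
start = INITIAL_SETTINGS[Archetype.PHYSICAL_ONLY]
print(adapt(start, difficulty_step=1, haptic_shift=-0.25))
# Settings(difficulty=5, haptic_weight=0.25)
```

Making `Settings` immutable means each adaptation produces a new record, which keeps the archetype defaults untouched and leaves a simple history of how a user’s settings evolved.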

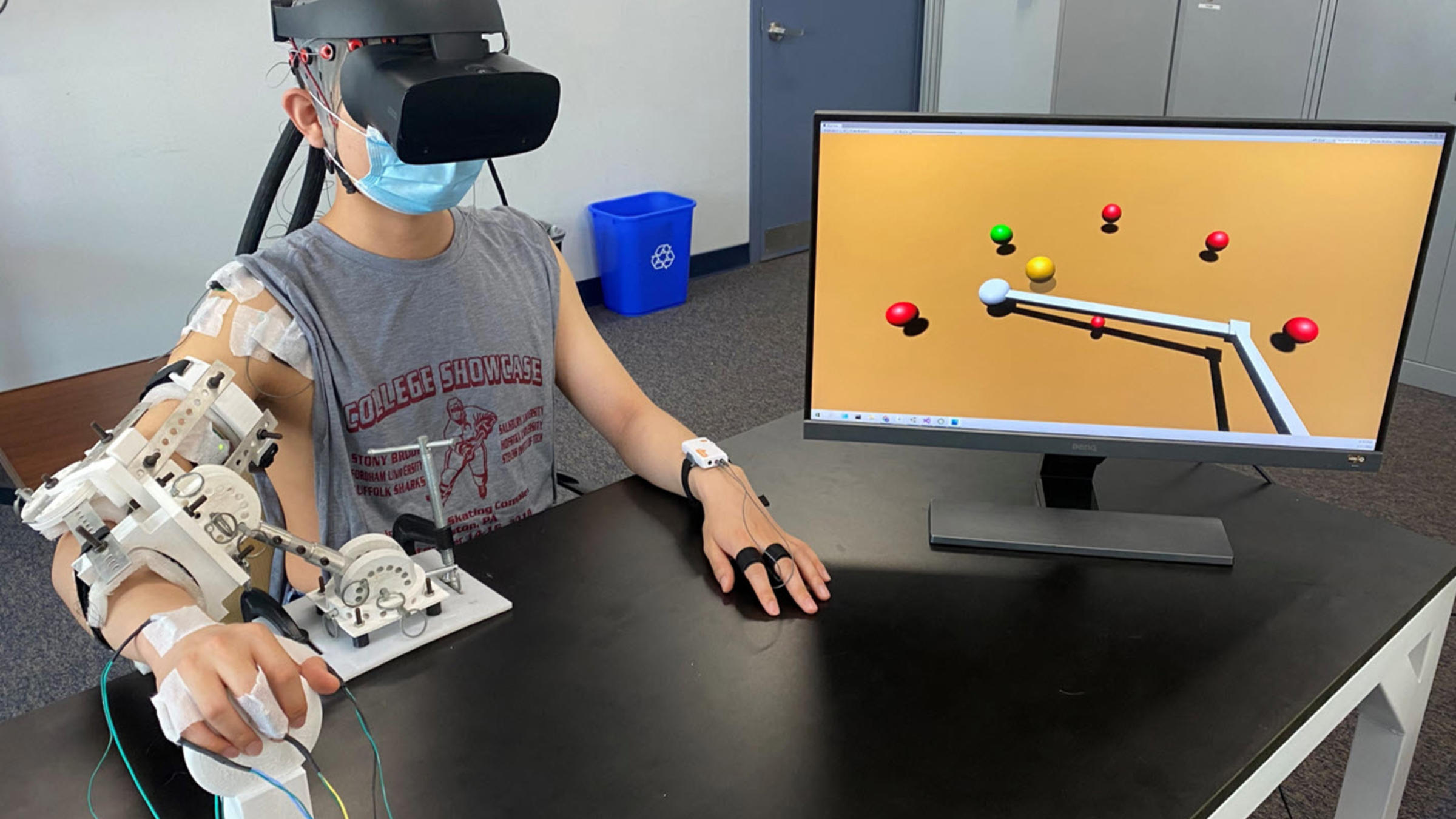

Wearing a VR headset and a supportive arm brace that provides movement resistance while reducing the fatigue of holding one’s arm in position, users play a VR-based game in which they control a virtual surrogate arm to reach and move an object from one location in the virtual environment to another.

“Because these individuals have spinal cord injury, they have a limit in the number of positions they can assume and how much muscle capacity they have. That's why it's that much more important to make sure that whatever training we give them is focused and better at ensuring gains,” Nataraj explained. “So we have a platform that allows them to maintain various arm positions, and, under the guidance of a physical therapist, we can select these positions to exercise particular muscles at different lengths. It's also synchronized with this virtual reality system that allows them to develop coordination as part of these gamified settings. This combination allows them to get a very unique training experience that they wouldn't otherwise have.”

Although the brace prevents the user from making larger gestures, the user still generates an intention to move through muscle-activation signals, which sensors in the brace measure as the user pushes against it. These signals, such as the intention to move forward, left or right, are decoded by a machine learning algorithm to control the virtual surrogate arm while the user’s real-world arm simultaneously performs isometric muscle training.
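The article does not say which machine learning algorithm decodes the brace signals. As an illustration only, the Python sketch below swaps in one of the simplest possible decoders, a nearest-centroid classifier. It summarizes each sensor channel by its root-mean-square (RMS) amplitude, averages those features for each intended direction during a calibration step, and labels new readings with the closest average. The three-channel layout, the direction labels and the sample values are hypothetical.

```python
import math
from collections import defaultdict

def rms_features(window):
    """window: one list of raw sensor samples per channel -> RMS amplitude per channel."""
    return [math.sqrt(sum(x * x for x in channel) / len(channel)) for channel in window]

class NearestCentroidDecoder:
    """Illustrative stand-in for the unspecified ML decoder: label a new window
    with the intended direction whose average feature vector is closest."""

    def __init__(self):
        self.centroids = {}

    def fit(self, windows, labels):
        """Calibration: average the RMS features of labelled example windows."""
        grouped = defaultdict(list)
        for window, label in zip(windows, labels):
            grouped[label].append(rms_features(window))
        self.centroids = {
            label: [sum(column) / len(column) for column in zip(*features)]
            for label, features in grouped.items()
        }
        return self

    def predict(self, window):
        """Return the label of the nearest centroid (Euclidean distance)."""
        features = rms_features(window)
        return min(self.centroids, key=lambda label: math.dist(features, self.centroids[label]))

# Hypothetical calibration: each direction mostly activates a different channel.
strong, weak = [2, -2, 2, -2], [0.1, -0.1, 0.1, -0.1]
decoder = NearestCentroidDecoder().fit(
    [[strong, weak, weak], [weak, strong, weak], [weak, weak, strong]],
    ["forward", "left", "right"],
)
print(decoder.predict([[1.8, -1.9, 2.1, -1.7], [0.2, 0.0, -0.2, 0.1], [0.1, 0.1, -0.1, 0.0]]))
# forward
```

A real decoder would be trained on many calibration windows per direction and would likely use richer features, but the flow of calibrating first and then mapping each new window of brace signals to a movement command is the same.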

Vibration motors in the arm brace deliver haptic feedback to guide the user on how far to move and in what direction, while visual guidance in the virtual environment directs the user toward the optimal path for completing virtual reaching tasks.

“For visual guidance, we actually provide a second, ‘ghost’ avatar that you need to match your primary avatar to. The more you deviate from that path, the more that virtual avatar shows up, and you need to work harder to make the two converge,” Nataraj said.
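One way to make a ghost avatar “show up more” as a user strays is to tie its opacity to the distance between the user’s virtual hand and the ideal path. The Python sketch below assumes a straight-line path from start to target and a linear opacity ramp. The function names and the `dead_band` and `max_dev` values (in metres) are hypothetical, not taken from the study.

```python
import math

def distance_to_segment(p, a, b):
    """Shortest distance from point p to the straight segment a -> b (3-D tuples)."""
    ab = [bi - ai for ai, bi in zip(a, b)]
    ap = [pi - ai for ai, pi in zip(a, p)]
    ab_len_sq = sum(c * c for c in ab)
    if ab_len_sq == 0:
        return math.dist(p, a)
    # Project p onto the segment and clamp so the closest point stays between a and b.
    t = max(0.0, min(1.0, sum(x * y for x, y in zip(ap, ab)) / ab_len_sq))
    closest = [ai + t * c for ai, c in zip(a, ab)]
    return math.dist(p, closest)

def ghost_opacity(hand, start, target, dead_band=0.02, max_dev=0.15):
    """0.0 (hidden) while the hand stays near the ideal path, rising linearly to
    1.0 (fully visible) at max_dev. Both thresholds are hypothetical, in metres."""
    deviation = distance_to_segment(hand, start, target)
    if deviation <= dead_band:
        return 0.0
    return min(1.0, (deviation - dead_band) / (max_dev - dead_band))

start, target = (0.0, 0.0, 0.0), (0.3, 0.0, 0.0)
print(ghost_opacity((0.15, 0.0, 0.0), start, target))  # 0.0: on the path, ghost hidden
print(ghost_opacity((0.15, 0.5, 0.0), start, target))  # 1.0: far off the path, ghost fully visible
```

The small dead band keeps the ghost from flickering in and out during minor, harmless wobbles, while the ramp makes larger deviations progressively more obvious.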

Such feedback cues can help the user improve coordination, strength and muscle control. They also provide a major advantage of VR motor therapy over traditional therapies, beyond the fun factor.

“Not only can we gamify it in such a way where it's more enjoyable, but we can give you more information through sensory feedback cues that guide you to do the movements better and learn faster,” Nataraj said. “That's something that you only gain with a more technological interface compared to traditional therapies.”

Objectivity and subjectivity

While classical physical therapy relies on the user or physical therapist consciously deciding whether to make a task harder or easier based on a subjective perception of the patient’s performance, VR therapy can automate that decision using real-time metrics of how the user is responding, both objectively and subjectively.

“We measure a host of physiological signals in the brain and in the muscles, including heart rate, eye tracking, electrodermal activity — which is essentially your sweating or perspiration while undergoing the therapy,” Nataraj said. “All these things are indicators at a cognitive level of how much you're being loaded, whether you're being emotionally aroused or have higher attention.”

These objective metrics help an adaptive control system decide whether to adjust task difficulty up or down, whether to provide more or fewer feedback cues and whether those cues should be more visual or more haptic. The adjustments are intended to keep the user engaged longer and doing more repetitions while improving overall performance. Repetition after repetition, the system, like the patient, learns to perform a little better.

“The adaptive controller continues to get information about how the person is feeling and how the person is doing, and, with each iteration, it should be making a change in the parameters that improve how the person is doing and how the person is feeling,” said Nataraj. “If for some reason it's going in the opposite direction, then the adaptive control system learns that and starts moving in the other direction.”
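Nataraj does not describe the control law. However, the behavior he outlines (keep changing a parameter while results improve, then reverse when they get worse) resembles a classic perturb-and-observe, or hill-climbing, scheme. The Python sketch below applies that generic technique to a single parameter, scoring each step with a weighted mix of performance (“how the person is doing”) and well-being (“how the person is feeling”). The class name, the equal weighting, the step size and the simulated user are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class HillClimbController:
    """Generic perturb-and-observe controller for one training parameter."""
    value: float               # e.g. a task-difficulty level
    step: float                # how far to move the parameter each iteration
    lower: float               # allowed range for the parameter
    upper: float
    weight_doing: float = 0.5  # hypothetical balance of performance vs. well-being
    direction: int = 1         # +1 = increase the parameter, -1 = decrease it
    last_score: Optional[float] = None

    def update(self, performance: float, well_being: float) -> float:
        """Score the latest repetition, reverse direction if the score fell, then step."""
        score = self.weight_doing * performance + (1 - self.weight_doing) * well_being
        if self.last_score is not None and score < self.last_score:
            self.direction = -self.direction  # the last change made things worse: turn around
        self.last_score = score
        self.value = min(self.upper, max(self.lower, self.value + self.direction * self.step))
        return self.value

# Simulated user whose combined score peaks at difficulty 5 (a made-up response curve).
ctrl = HillClimbController(value=2, step=1, lower=1, upper=10)
history = []
for _ in range(12):
    s = -(ctrl.value - 5) ** 2
    history.append(ctrl.update(performance=s, well_being=s))
print(history)  # [3, 4, 5, 6, 5, 4, 5, 6, 5, 4, 5, 6]
```

Because this controller always takes a step, it settles into a small oscillation around the best setting rather than stopping on it. A practical system would likely shrink the step size over time or only act when the score changes by more than some tolerance.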

Monitoring these variables can also improve results by informing researchers and the user of what not to do.

“If it looks like they're exhausting themselves at one difficulty level, we need to make some kind of modification to either bring the difficulty level down or even to let the person know that maybe it's time to take a break,” Nataraj said. “That's not fundamentally what we're looking to do, but this gives additional levels of information that are more nuanced so the user can feel good about continuing to move forward without overloading both mentally and physically.”
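The check Nataraj describes, easing off or suggesting a break when a user appears overloaded, could be sketched as a simple rule on top of physiological signals like those he mentions. In this hypothetical Python example, heart rate and electrodermal activity are compared with a resting baseline and combined with any drop in task success. The `Reading` fields, the `load_index` formula and both thresholds are illustrative assumptions, not values from the project.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Reading:
    heart_rate: float    # beats per minute
    eda: float           # electrodermal activity (skin conductance), microsiemens
    success_rate: float  # fraction of recent reaches completed, 0.0 to 1.0

def load_index(r: Reading, baseline_hr: float, baseline_eda: float) -> float:
    """Hypothetical overload score: mean relative rise in heart rate and EDA
    above the resting baseline, plus the shortfall in task success."""
    hr_rise = (r.heart_rate - baseline_hr) / baseline_hr
    eda_rise = (r.eda - baseline_eda) / baseline_eda
    return (hr_rise + eda_rise) / 2 + (1.0 - r.success_rate)

def recommend(r: Reading, baseline_hr: float, baseline_eda: float,
              lower_at: float = 0.6, break_at: float = 1.0) -> str:
    """Map the load score to an action; both thresholds are placeholders."""
    score = load_index(r, baseline_hr, baseline_eda)
    if score >= break_at:
        return "suggest a break"
    if score >= lower_at:
        return "lower difficulty"
    return "continue"

print(recommend(Reading(heart_rate=91, eda=3.0, success_rate=0.7), baseline_hr=70, baseline_eda=2.0))
# lower difficulty
```

Keeping the rule separate from the adaptive controller reflects Nataraj’s point that stopping a user is not the system’s main goal. The rule acts as a safeguard that only overrides normal adaptation when the signals suggest overload.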

Nataraj and team will also gather subjective feedback from users via surveys, asking such questions as, “To what extent do you feel like you're controlling the system,” “To what extent do you feel like you're doing well” and “To what extent do you think this is useful?”

These perception-level questions, he said, indirectly inform the task difficulty level while prioritizing well-being and fostering a greater sense of agency, all of which should improve both task performance and overall retention.

“It doesn't matter how much potential the technology has: if people don't like these computerized devices or don’t see the utility in what they’re doing, they won't use them,” Nataraj said. “So we're trying to come up with a more universal way to consider a lot of things at once — not only how they're doing, but how they're feeling both physiologically and perceptionally. Hopefully, we can come up with a network of interactions across those dimensions that will accelerate people performing better and maybe feeling like it's easier while they do it.”

Progressive improvements

The NSF project builds on Nataraj’s previous research on rehabilitative devices and sensory feedback, including a smart glove platform that uses visual, audio and haptic feedback to strengthen a user’s sense of agency and help them perform more effective reaching and grasping movements.

The breakthrough of that study, in fact, said Nataraj, was discovering that guidance feedback helped users learn to perform better and faster, within just a few repetitions of training, even when those users were able-bodied and could already perform the movements easily.

“Some of those ideas we're leveraging as part of this platform,” he said. “That work definitely laid the foundation of feasibility for what we're doing now.”

Additionally, the NSF project will expand an earlier outreach initiative that recruits high school students from under-resourced school districts through the Stevens Accessing Careers in Engineering and Science program to learn the fundamentals of programming VR environments using Unity, a game development engine.

In the first phase of the program, Nataraj and team will provide weekly guidance to the students on how to develop an application specific to a disease of their choosing. In the second phase, each student will be paired with someone with spinal cord injury to apply the skills they’ve learned to develop an application that is custom-built to serve that particular person’s everyday needs.

Some students may have the opportunity to present their work at summer camps held at Stevens or even enter their apps into science competitions.

“We actually had one student who placed first at WESEF [the Regeneron Westchester Science and Engineering Fair] in computer science last year,” Nataraj said.

Intelligent adaptation

At present, Nataraj’s platform focuses on training users to better perform basic reaches in particular directions from different initial arm positions. That feat, he is quick to point out, “is actually a big step forward from what they're able to do with platforms that are otherwise available.”

In the future, he hopes to extend the platform so users can learn increasingly complex movements, including ones that recruit different muscles in different combinations.

Nataraj said his sensory-driven training platform could also easily be adapted for other research and industrial purposes — such as remote-controlled robots or vehicles — that could benefit from having more intuitive control of a device via a virtual surrogate.

“It's the same sort of adaptive framework that can be used for all these different applications, where we're adapting the training in such a way where we're monitoring the user, how they're feeling, how they're doing, so as to customize the training so they can learn to operate a surrogate better, faster and sooner,” he said.

The framework could also be applied to everyday devices such as smartphones or smart wearables, for example by adjusting an interface’s font size or text placement in real time based on a user’s level of focus or fatigue.

The goal, said Nataraj, would be “to figure out ways you can change the interface to make the app more user-friendly and do it in such a way where the person feels greater agency and performs better with it.”