Ye Yang’s Research on Crowdtesting Garners ACM Distinguished Paper Award

SSE professor’s findings improve the cost effectiveness of crowdtesting.

The 42nd International Conference on Software Engineering (ICSE) was all set to take place in May 2020 in Seoul, South Korea, until the pandemic brought those plans to a halt.

For Ye Yang, an associate professor at the School of Systems and Enterprises, the disappointment of not attending this important conference in person was lessened by the great news that she and her colleagues had been awarded the ACM Distinguished Paper Award at the conference when it took place online in July. This is the second time Yang and her collaborators have won this prestigious award; the first was at ICSE 2019.

ICSE is recognized as the top conference in the field of software engineering. Dr. Yang was among more than 1,900 who participated in the conference, many of them researchers, practitioners and educators eager to hear about recent innovations, research and trends in software engineering. Distinguished Papers represent the very best contributions to the ICSE Technical Track and are awarded to up to 10% of the accepted papers.

Pilot Study Points to Potential Cost Savings for Software Crowdsourcing Practitioners

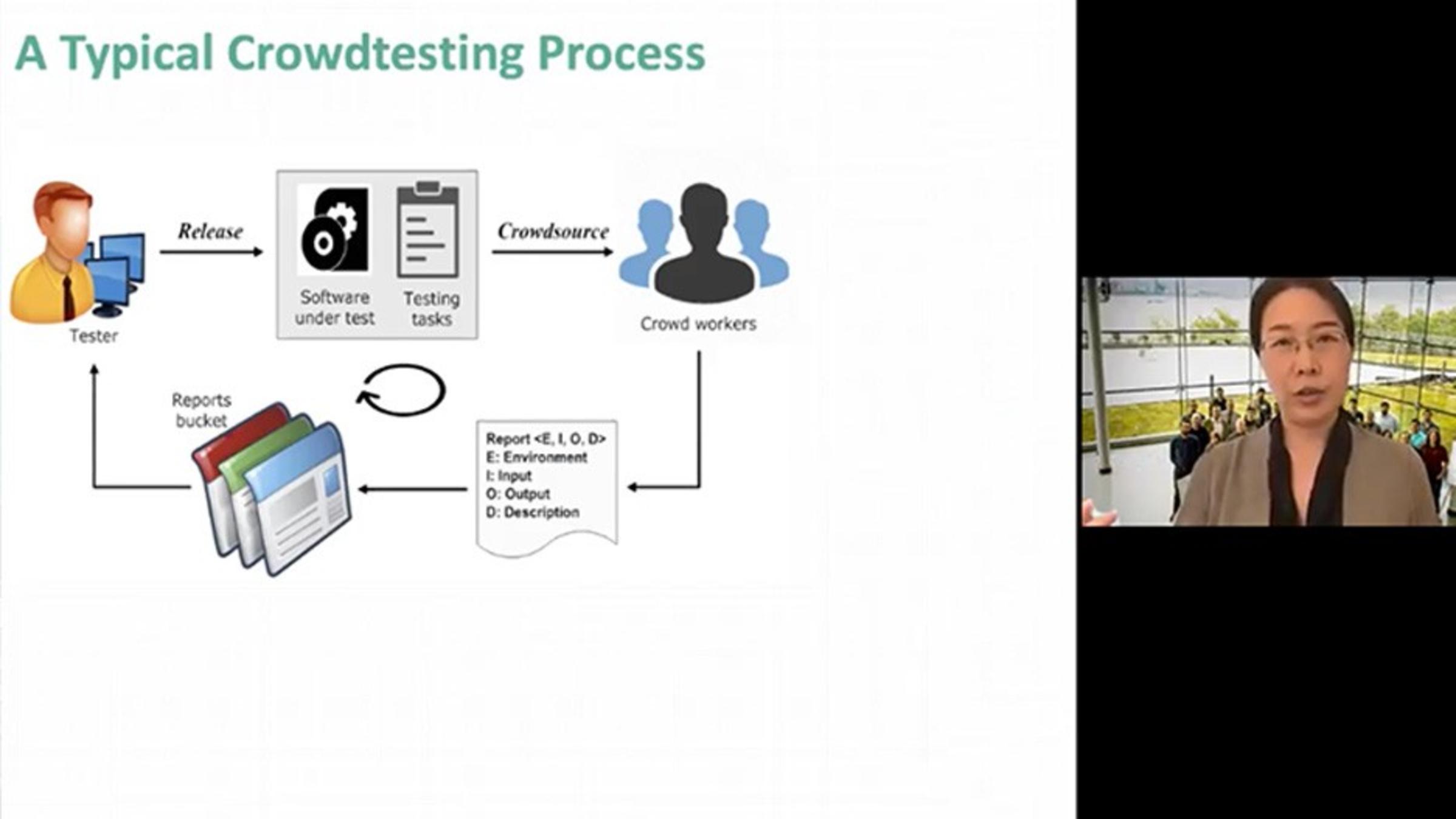

Her research paper, “Context-Aware In-Process Crowdworker Recommendation,” describes a pilot study of online crowdtesting, an emerging paradigm for testing mobile applications. The study reveals the prevalence of long non-yielding windows, that is, stretches of consecutive test reports in which no new bugs are discovered.
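To make the idea of a non-yielding window concrete, here is a minimal sketch. It assumes each incoming test report has already been labeled as either revealing a new bug or not. The function name and the sample data are hypothetical illustrations, not taken from the paper.

```python
from typing import List


def longest_non_yielding_window(found_new_bug: List[bool]) -> int:
    """Return the longest run of consecutive reports that found no new bug.

    found_new_bug[i] is True when the i-th report (in arrival order)
    revealed a previously unseen bug. This labeling is an assumption
    made for illustration only.
    """
    longest = 0
    current = 0
    for yielded in found_new_bug:
        if yielded:
            # A new bug ends the current non-yielding window.
            current = 0
        else:
            current += 1
            longest = max(longest, current)
    return longest


# Hypothetical sequence of eight reports in arrival order.
reports = [True, False, False, True, False, False, False, True]
print(longest_non_yielding_window(reports))  # prints 3
```

In this made-up sequence, the fifth through seventh reports form the longest window, during which testing effort is spent without uncovering anything new.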

“Our approach, iRec, will potentially allow mobile applications to be tested with greater cost efficiency, through identifying and optimizing open participation of crowd testers,” she said.

Sharing Research Findings with the Stevens Community

The Stevens community gained insight into the methodology when Dr. Yang presented highlights of the research at the SSE Dean’s Virtual Seminar Series on September 23. For the online audience, she described the steps that led to a context-aware in-process crowdworker recommendation approach, iRec, to dynamically characterize, learn and rank a diverse set of capable crowdworkers, in an effort to detect more bugs earlier and potentially shorten the non-yielding windows.

“Congratulations to Dr. Yang and her colleagues for this well-deserved recognition for research that addresses a critical need in the software engineering field,” said Yehia Massoud, dean of the School of Systems and Enterprises.

“Our main contribution from the study is that we provide a context model of a finer granularity, which is time-sensitive and case-sensitive, compared with the existing techniques,” Yang said, adding that she is looking toward a future in which artificial intelligence is leveraged to guide human computations in the crowdsourced software engineering community.

“One example we are currently working on is trying to learn from the current testing wisdom and being able to predict the buggy features for a new mobile app,” she said.

For the millions of users who get frustrated with buggy apps, Dr. Yang’s work is a source of good news.