Stevens works with Autonomous Healthcare to develop NASA-supported technology that monitors heartbeat and breathing with radar, cameras and algorithms

Astronauts, athletes, hospital patients and others require constant monitoring of vital signs to watch for dangerous health problems. That typically means strapping cardiac and respiratory sensors directly to the chest, wrist or abdomen, sometimes with wiring, which is uncomfortable and restrictive.

Some sensors can estimate certain vital signs by sampling and viewing small changes in light reflection as blood flows, but these solutions must be worn at all times, are battery-operated and require constant contact with the skin.

Stevens electrical and computer engineering professor Negar Ebadi and her Ph.D. student Arash Shokouhmand, working with a Hoboken-based health technology company, have a better idea, and NASA, the nation's space and aeronautics agency, has agreed to support its development.

"There is a need for monitoring crew, especially for exploration missions beyond low-earth orbit," says Ebadi, who advises Shokouhmand and collaborates with Hoboken-based Autonomous Healthcare on the research.

"We decided to work together to address this challenge."

Scanning, beam-forming, data-integration technologies

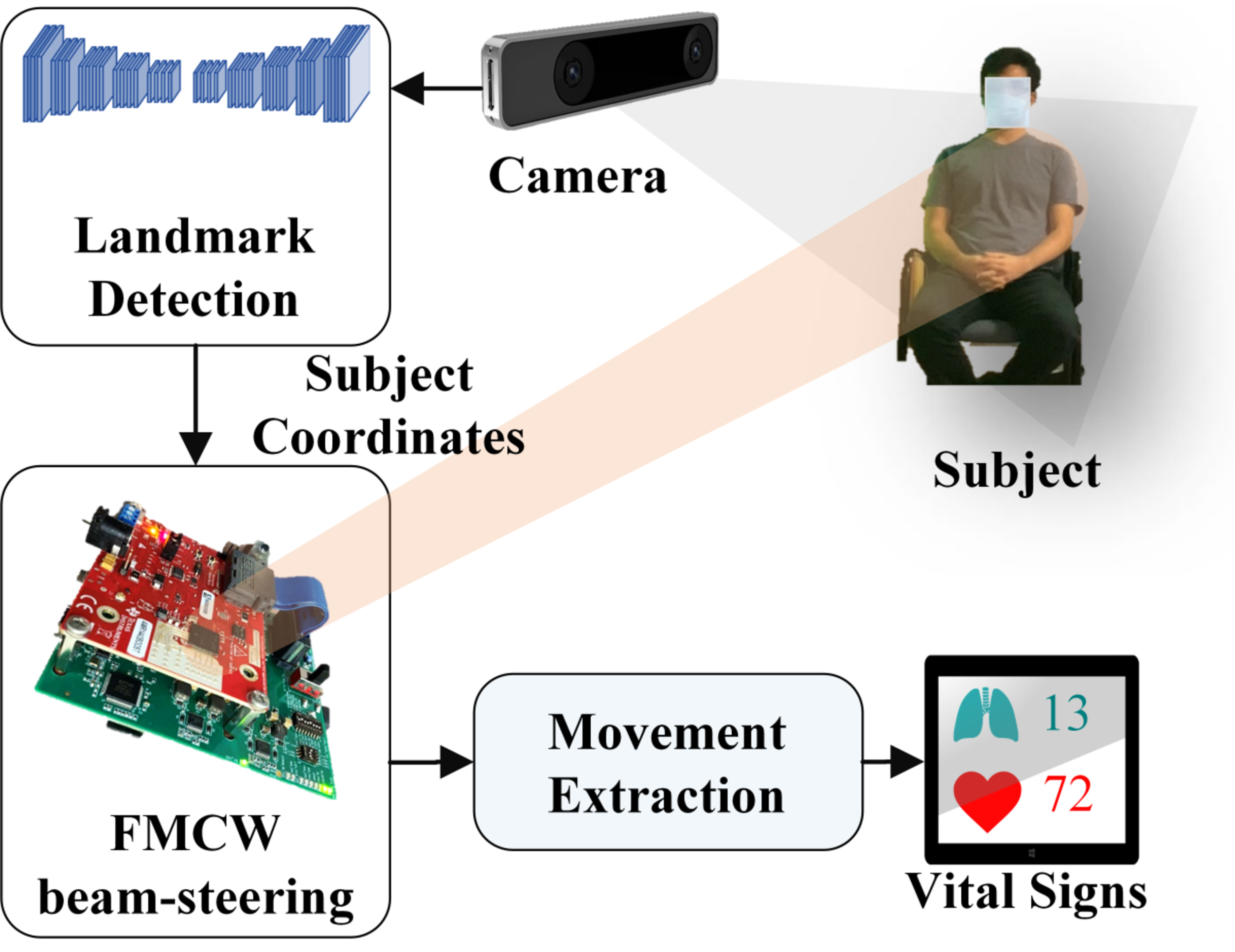

The proposed system combines radar scanning with 3D color camera images to produce accurate vital-sign readings, individually identified, in real time. The collaboration leverages Ebadi's expertise in developing radar-based systems and Autonomous Healthcare’s expertise in developing AI-based computer vision and sensor fusion-based technologies for healthcare.

A special "continuous-wave" radar scans the body continuously while a color/depth camera images it in 3D. The system identifies landmarks on the human torso using a type of AI known as a convolutional neural network, constantly correcting the focus of the radar beam to obtain the best possible estimates of heart and respiratory rate.
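One way to picture the beam correction: once the camera locates a torso landmark in 3D, that point can be converted into the radar's frame of reference and turned into steering angles. The Python sketch below is only an illustration under stated assumptions, not the team's implementation. The calibration values, the axis conventions and the function names (`camera_to_radar`, `steering_angles`) are all hypothetical.

```python
import math

# Hypothetical calibration: a rotation (3x3) and translation (meters) taking
# camera coordinates into the radar's frame. Identity rotation plus a 10 cm
# sideways offset is an assumed example, not measured data.
ROTATION = ((1.0, 0.0, 0.0),
            (0.0, 1.0, 0.0),
            (0.0, 0.0, 1.0))
TRANSLATION = (0.10, 0.0, 0.0)


def camera_to_radar(point_cam):
    """Apply the rigid transform p_radar = R * p_cam + t."""
    return tuple(
        sum(ROTATION[i][j] * point_cam[j] for j in range(3)) + TRANSLATION[i]
        for i in range(3)
    )


def steering_angles(point_radar):
    """Return (azimuth, elevation) in degrees toward a point.

    Assumed axes: x to the right, y up, z pointing away from the radar.
    """
    x, y, z = point_radar
    azimuth = math.degrees(math.atan2(x, z))
    elevation = math.degrees(math.atan2(y, math.hypot(x, z)))
    return azimuth, elevation


# A chest landmark seen by the depth camera 2 m straight ahead (made-up value).
chest_cam = (0.0, 0.0, 2.0)
chest_radar = camera_to_radar(chest_cam)
az, el = steering_angles(chest_radar)
print(f"steer beam to azimuth {az:.2f} deg, elevation {el:.2f} deg")
```

In a real system the rotation and translation would come from a calibration step between the two sensors, and the landmark would be refreshed on every camera frame so the beam can follow a moving subject.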

"We perform beamforming and beam-steering, based on a one-to-one mapping between the camera and radar coordinate systems.

"This method provides coordinates for automatic, real-time interaction between the camera and the radar," explains Ebadi, "and to our knowledge it is the first work applying this to vital-sign monitoring."

After those body regions are imaged, additional algorithms calculate the likely respiratory rate and heart rate in real time using a technique Ebadi and Shokouhmand created and call "singular value-based point detection" (SVPD).
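For readers curious how a rate can be read from a chest-motion signal at all, here is a generic Python sketch. It is a textbook frequency-peak search, not the SVPD technique, and the synthetic signal, sampling rate and frequency bands are assumptions chosen for illustration.

```python
import math


def dominant_rate_per_minute(signal, sample_rate_hz, low_hz, high_hz, step_hz=0.01):
    """Return the strongest frequency inside [low_hz, high_hz], in cycles per minute."""
    mean = sum(signal) / len(signal)
    centered = [value - mean for value in signal]  # remove the constant offset
    best_freq, best_power = low_hz, -1.0
    steps = int(round((high_hz - low_hz) / step_hz))
    for k in range(steps + 1):
        freq = low_hz + k * step_hz
        omega = 2.0 * math.pi * freq / sample_rate_hz
        # Correlate the signal with a cosine and a sine at this frequency.
        real = sum(x * math.cos(omega * i) for i, x in enumerate(centered))
        imag = sum(x * math.sin(omega * i) for i, x in enumerate(centered))
        power = real * real + imag * imag
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq * 60.0


# Synthetic chest displacement: 15 breaths/min plus a weaker 72 beats/min component.
SAMPLE_RATE_HZ = 20.0
signal = [
    1.0 * math.sin(2.0 * math.pi * 0.25 * i / SAMPLE_RATE_HZ)
    + 0.1 * math.sin(2.0 * math.pi * 1.2 * i / SAMPLE_RATE_HZ)
    for i in range(800)  # 40 seconds of samples
]

breaths = dominant_rate_per_minute(signal, SAMPLE_RATE_HZ, 0.1, 0.5)
beats = dominant_rate_per_minute(signal, SAMPLE_RATE_HZ, 0.8, 2.0)
print(f"respiratory rate ~ {breaths:.0f}/min, heart rate ~ {beats:.0f}/min")
```

Searching two separate bands keeps the much larger breathing motion from masking the smaller heartbeat component.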

"The SVPD method gives additional estimation power to the system," she says.

SVPD automatically locates, in 3D space, the exact point representing each micro-movement of the chest caused by heartbeats or breathing. It does so through a search algorithm that leverages the ratio of each dominant value sampled to each second-dominant value sampled, once again in real time.
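The ratio-based search can be sketched loosely in Python. Reading "dominant value" as the largest singular value of a small matrix of samples is an assumption suggested by the method's name. The two-channel sample layout, the candidate points and the function names are hypothetical, and this is not the published SVPD algorithm.

```python
import math


def singular_value_ratio(samples):
    """Ratio of the largest to the second-largest singular value of an N x 2 matrix.

    `samples` is a list of (u, v) pairs. The singular values are the square
    roots of the eigenvalues of the 2 x 2 Gram matrix A^T A.
    """
    a = sum(u * u for u, _ in samples)
    b = sum(u * v for u, v in samples)
    c = sum(v * v for _, v in samples)
    mean = (a + c) / 2.0
    spread = math.hypot((a - c) / 2.0, b)
    sigma_1 = math.sqrt(mean + spread)
    sigma_2 = math.sqrt(max(mean - spread, 0.0))
    return sigma_1 / max(sigma_2, 1e-12)  # guard against division by zero


def best_point(candidates):
    """Pick the candidate point whose samples give the highest ratio."""
    return max(candidates, key=lambda point: singular_value_ratio(candidates[point]))


# Made-up two-channel samples at two hypothetical 3D points (meters).
candidates = {
    (0.0, 0.3, 2.0): [(1.0, 0.5), (-1.0, -0.4), (0.9, 0.5), (-1.1, -0.6)],
    (0.4, 0.3, 2.0): [(1.0, -0.8), (0.7, 0.9), (-0.9, 0.6), (-0.6, -1.0)],
}
print(best_point(candidates))
```

A high ratio means the samples at a point are dominated by a single coherent component, which is the kind of signature a search like this could use to favor one location over another.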

That information, she explains, is then used to better train the focus of the antenna beam.

High monitoring accuracy in early tests

To simulate spacecraft crew conditions, Ebadi tested the technology on individual subjects as well as on groups of subjects performing simulated tasks at different angles and distances while the radars scanned and imaged them.

"Sometimes they tilted their bodies toward or away from the camera-radar system, at different angles, to determine how well the system worked under various conditions," she explains.

During early testing, respiratory rates of subjects were accurately estimated more than 96% of the time, in most cases, while heart rates were accurately estimated more than 85% of the time in every case.

After tweaking the software to focus more specifically on upper chest and abdomen regions, the accuracy improved even further.

"One advantage of the combination of radar and camera system," notes Ebadi, "is that it leverages advantages of both."

Another key to the system's broader potential is that it ties the data collected to each specific individual being monitored.

"Radar systems alone still cannot yet recognize individual people from data, but the integration of a camera system and AI in our systems adds facial recognition and allows the system to know at all times which individual in a group has, for example, suddenly experienced a racing heart or stopped breathing."

Another beneficial Stevens-industry partnership

Autonomous Healthcare, the partner firm in the research, develops AI-based technologies for critical care and patient monitoring.

The company's goal, says co-founder and CEO Behnood Gholami, is to commercialize the vital-sign monitoring technology both for space applications and clinical applications in remote patient monitoring here on Earth.

"We are developing the next generation of clinical monitoring systems on the edge," says Gholami, who serves as principal investigator for the NASA-funded project.

"All computations in this system are done in real-time, and without internet connectivity. It is not feasible to assume you will have access to a powerful server when the system is used on a spacecraft with power and weight limitations."

The joint project, says Gholami, is a stellar example of university and industry collaborating to develop innovative technologies that benefit all.

"Stevens," he says, "has been the perfect academic partner for us."

Autonomous Healthcare research engineer Samuel Eckstrom also contributed to the research and a journal paper.