Improving Human-Robot Interaction Relies On Mimicking Human Behavior

Electrical and computer engineering professor Yi Guo investigates how humans behave in crowds in order to design robots they will trust

In futuristic movies, robots have replaced humans in customer service roles across society. But if you encountered a robot in a crowded airport terminal today, and it told you to step back or to turn around and walk in a different direction, would you?

Why? Or, perhaps more importantly, why not?

"It's difficult to trust other humans," Stevens Institute of Technology electrical and computer engineering professor Yi Guo said. "It can be even more difficult to trust a machine."

But building trust into the design of robots is necessary if they are ever to be accepted as a part of everyday human life. The key to building this trust, Guo says, is prioritizing human comfort.

Guo, who is also founder and director of the Stevens Robotics and Automation Lab, integrates human behaviors into robots' programming to create optimal human-robot interactions.

Funded by a $358,000 grant from the National Science Foundation, Guo's current research centers on identifying and isolating real-life pedestrian behaviors to train autonomous mobile robots to navigate, move, and act appropriately in crowded human environments.

Building a human-friendly robot

Traditionally designed mobile robots, Guo said, move in a way that is unacceptable in polite human society.

Designed to prioritize collision avoidance, speed, and efficiency, such robots "treat stationary obstacles and dynamic obstacles as just obstacles. It doesn't matter to the robot if it's a human or an object." A pillar, a plant, and a pedestrian are all treated with equal consideration.

Thus, the path determined by a traditionally designed robot may indeed be fast and efficient and may successfully avoid collisions; however, it might also require cutting in front of moving pedestrians, whizzing past too closely or too quickly for human comfort, or effectively pushing people out of the robot's way.

Unsurprisingly, humans respond poorly to this kind of behavior. In order for robots to assimilate into the world of humans, they must be taught to act appropriately.

With this goal in mind, Guo and her team use novel machine learning methods to develop computational intelligence algorithms that train their robots to behave more like humans. This work builds, in part, on her previous NSF-funded research using robots for crowd management in emergency situations.

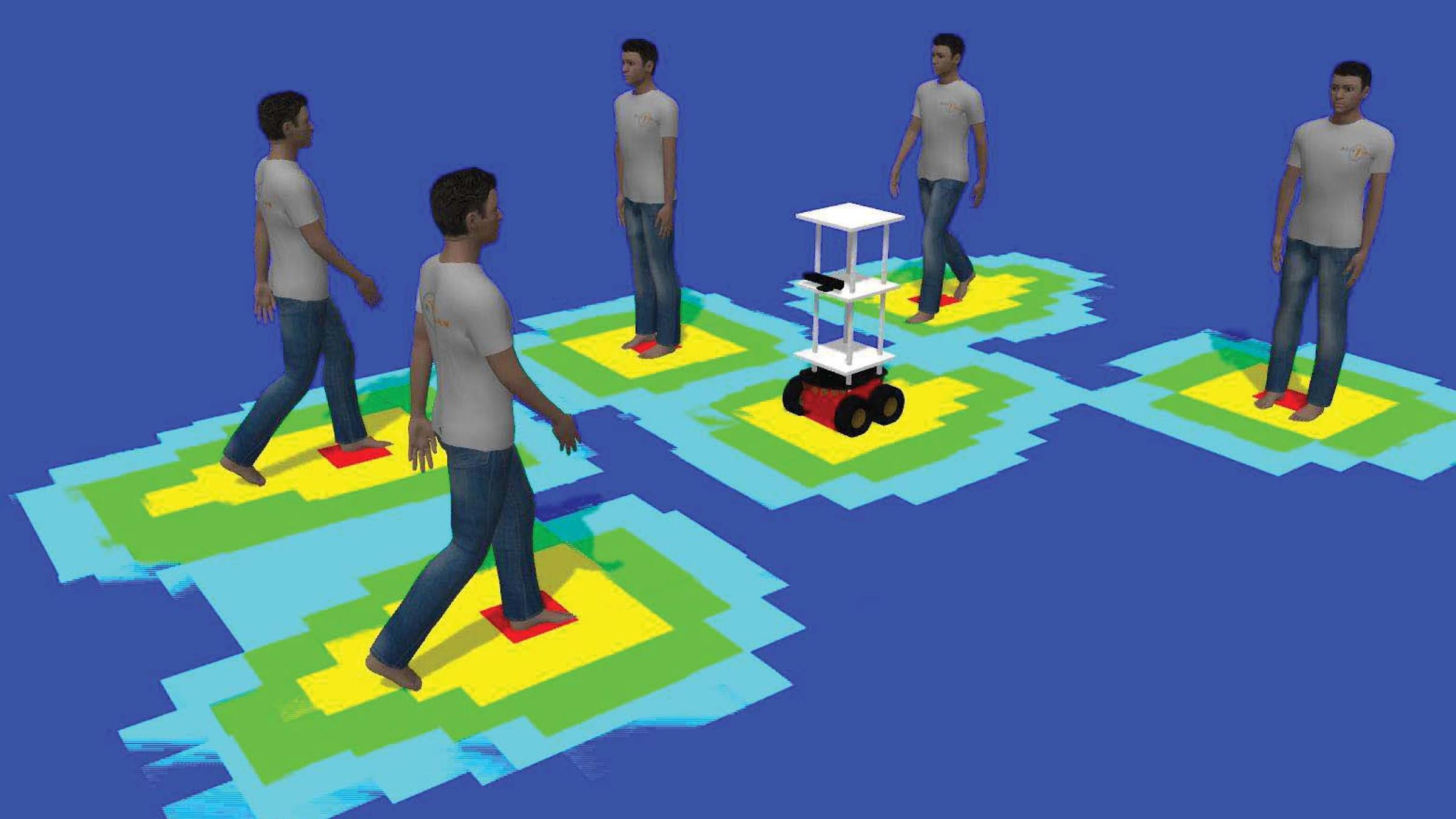

Using existing open data sets containing 10 months of human mobility trajectory data gathered in shopping centers by Japanese researchers, Guo identifies patterns of pedestrian navigation behavior and extracts social affinity features that map how individuals in a crowd move in relation to one another. These behaviors are then used, via an imitation learning algorithm, to train a deep neural network that ultimately helps the robot decide how to plan and navigate a route from one point to another in an efficient yet human-friendly way.
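As a rough illustration of what a feature describing how people move relative to one another might look like, the sketch below expresses each neighbor's position and motion relative to one pedestrian's own direction of travel. The function name `relative_features`, the tuple layout, and the choice of distance, bearing, and relative speed are assumptions made for illustration only; the article does not say how Guo's team defines social affinity features or how the neural network consumes them.

```python
import math

def relative_features(ego_pos, ego_vel, neighbors):
    """Describe each neighbor relative to one ("ego") pedestrian.

    Hypothetical feature layout, not the one used in Guo's research.
    ego_pos, ego_vel: (x, y) tuples in meters and meters/second.
    neighbors: list of (pos, vel) pairs in the same world frame.
    Returns (distance, bearing, relative_speed) tuples, nearest first.
    Bearing is in radians: 0 is straight ahead, positive is to the left.
    """
    heading = math.atan2(ego_vel[1], ego_vel[0])
    features = []
    for (px, py), (vx, vy) in neighbors:
        dx, dy = px - ego_pos[0], py - ego_pos[1]
        distance = math.hypot(dx, dy)
        # Angle to the neighbor, measured from the ego's heading,
        # wrapped into the range [-pi, pi).
        bearing = math.atan2(dy, dx) - heading
        bearing = (bearing + math.pi) % (2 * math.pi) - math.pi
        relative_speed = math.hypot(vx - ego_vel[0], vy - ego_vel[1])
        features.append((distance, bearing, relative_speed))
    return sorted(features)
```

In an imitation learning setup, vectors like these could serve as the inputs, paired with the movement the recorded pedestrian actually made next, so that a model learns to reproduce the human choice.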

"We design the human-robot interaction behavior inspired by human-human interactions," Guo said, "so that when we deploy our robots, the robot will navigate like humans."

Onboard sensors such as lidar or surveillance cameras help the robots distinguish between sentient beings and non-sentient objects and react to changes in crowd behavior in real time.

"We're trying to have the robots be smart in observing the environment, obtaining humans' movement patterns, and then adjusting their motion and navigation behavior so they can have seamless human-robot interactions," Guo said.

Teaching robots to blend into the crowd

One of the distinctions of Guo's research is its emphasis on understanding the human side of human-robot interactions.

Current robot motion planners are inadequate, Guo said, because current models of pedestrian dynamics on which they are based fail to capture the nuance and complexity of human motion behavior in crowds.

By using real-life pedestrian data to investigate how crowds of people move within an enclosed space, Guo and her team have been able to pinpoint both conscious and unconscious pedestrian and crowd dynamics behaviors and apply them to the robots' learning accordingly.

For example, people in crowds tend to instinctively follow others, Guo explained. When they encounter a crowd of moving people, "humans very unconsciously follow the leader," whether they know or can identify a specific leader or not.

Untrained robots have no such inherent tendency. Faced with insufficient options for charting a path through a dense crowd and no appropriate pedestrian dynamics data upon which to model their behavior, traditionally designed robots often simply freeze in place.

Guo's robots, in contrast, "are trained, in case it cannot find a way to cut through, to just follow somebody," she said. "Pick a subject. Follow it." By mimicking this human instinct to go with the flow, Guo's robots are able to reach their desired destination.
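The "pick a subject, follow it" fallback can be pictured with a hand-written rule, although Guo's robots learn the behavior from pedestrian data rather than from a rule like this. The sketch below is a hypothetical stand-in: `pick_leader`, its parameters, and the 45-degree threshold are all assumptions chosen for illustration. It selects the nearest pedestrian who is walking roughly toward the robot's goal.

```python
import math

def pick_leader(robot_pos, goal_pos, pedestrians, max_angle=math.pi / 4):
    """Return the index of a pedestrian to follow, or None.

    Hypothetical rule for illustration only.
    pedestrians: list of (pos, vel) pairs of (x, y) tuples.
    A candidate must be moving, and its direction of travel must lie
    within max_angle radians of the robot-to-goal direction. Among
    candidates, the one nearest to the robot is chosen.
    """
    gx, gy = goal_pos[0] - robot_pos[0], goal_pos[1] - robot_pos[1]
    goal_dist = math.hypot(gx, gy)
    if goal_dist == 0:
        return None  # already at the goal, nobody to follow
    best_index, best_dist = None, math.inf
    for i, ((px, py), (vx, vy)) in enumerate(pedestrians):
        speed = math.hypot(vx, vy)
        if speed == 0:
            continue  # a stationary person is not a leader
        # Angle between the pedestrian's velocity and the goal direction.
        cos_angle = (vx * gx + vy * gy) / (speed * goal_dist)
        cos_angle = max(-1.0, min(1.0, cos_angle))
        if math.acos(cos_angle) > max_angle:
            continue
        dist = math.hypot(px - robot_pos[0], py - robot_pos[1])
        if dist < best_dist:
            best_index, best_dist = i, dist
    return best_index
```

A planner might call a function like this only when its normal path search fails, which is the situation in which the article says traditionally designed robots freeze.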

Robots also have no inherent sense of what constitutes a human comfort zone. Using real-world data as their guide, Guo's robots are trained to maintain an appropriate, comfortable distance from their pedestrian neighbors.

Guo's ultimate goal is for the robots to act in such familiar and innocuous ways that they simply blend into the environment.

However, a training algorithm is only as good as the data used to develop it, a lesson Guo and her team learned early on. At an early stage in the project, they tried to develop a model based on inferred human motion behavior features with which to train the robots.

"Then we found out," she said with a laugh, "how good the model was."

The value of employing actual pedestrian data in their training efforts quickly became evident. Gleaning social affinity features from real human trajectory data has allowed the researchers to incorporate behavioral nuance and complexity into the robots' actions and decision-making processes that would not be possible otherwise.

Objective metrics of the robots' performance will include whether a robot reaches its intended goal position and how smooth its trajectory is. But Guo and her team are also measuring subjective performance: the acceptability and predictability of the robot's movements, such as whether humans feel the robot is friendly and whether they feel comfortable sharing the space with it. These latter metrics will be determined via surveys of the human subjects involved in testing.
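The two objective metrics could be computed from a recorded trajectory along the following lines. This is a minimal sketch under stated assumptions: the article does not define how smoothness is measured, so the total absolute heading change used here, the 0.5-meter goal tolerance, and the function names `reached_goal` and `total_turning` are illustrative choices rather than the team's actual definitions.

```python
import math

def reached_goal(trajectory, goal, tolerance=0.5):
    """True if the last trajectory point is within tolerance meters of goal.

    The tolerance value is an assumed placeholder.
    """
    x, y = trajectory[-1]
    return math.hypot(x - goal[0], y - goal[1]) <= tolerance

def total_turning(trajectory):
    """Sum of absolute heading changes (radians) along the path.

    Used here as an assumed proxy for smoothness: lower is smoother,
    and a straight line scores 0. Zero-length segments are skipped.
    """
    headings = []
    for (x0, y0), (x1, y1) in zip(trajectory, trajectory[1:]):
        if (x0, y0) != (x1, y1):
            headings.append(math.atan2(y1 - y0, x1 - x0))
    total = 0.0
    for h0, h1 in zip(headings, headings[1:]):
        # Wrap the difference into [-pi, pi) before taking its size.
        diff = (h1 - h0 + math.pi) % (2 * math.pi) - math.pi
        total += abs(diff)
    return total
```

The subjective metrics have no equivalent calculation; as the article notes, they come from surveys of the people who shared the space with the robot.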

Robots in action

Both Guo's improved robot controllers and improved pedestrian dynamics modeling methods have the potential for use in public safety applications such as robot-assisted emergency evacuations and search-and-rescue efforts.

Such robots could also be deployed in busy, large-scale public environments like shopping malls, museums, stadiums, and airports as guides to assist with pedestrian regulation, crowd management, and navigation.

The robots also have the potential for use in more intimate settings, such as personal guides in elder-care facilities.

Such uses could benefit society economically by supplementing the human workforce while reducing labor costs, as well as by reducing the need for expensive crowd management infrastructure modifications.

Because Guo's algorithm is highly configurable, it's possible the robots could even be used to address public health needs.

One such application inspired by current events would be to configure the robots to use their onboard sensors to determine whether people are adhering to coronavirus pandemic social distancing guidelines. If the robot sensed people were standing too close together, it could emit an alarm or message and position itself within the crowd to effectively separate people to an appropriate distance.
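The detection half of that idea reduces to a pairwise distance check once the sensors have produced a position for each person. The sketch below assumes those positions are already available as (x, y) coordinates in meters; the function name `too_close_pairs` and the 2-meter default are hypothetical, and the article does not describe how such a check would actually be implemented.

```python
import math
from itertools import combinations

def too_close_pairs(positions, min_distance=2.0):
    """Return index pairs (i, j), i < j, of people closer than min_distance.

    positions: list of (x, y) tuples in meters.
    min_distance: assumed guideline distance in meters.
    """
    return [
        (i, j)
        for (i, a), (j, b) in combinations(enumerate(positions), 2)
        if math.dist(a, b) < min_distance
    ]
```

A non-empty result would be the trigger for the alarm, message, or repositioning behavior described above.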

Navigating the pandemic and beyond

Like so many projects this past year, Guo's project timeline has been disrupted by the pandemic. While the robots have been tested on a smaller scale under controlled settings in her lab on the Stevens campus, full-scale testing amongst uncontrolled crowds on campus and in shopping malls has been postponed due to coronavirus restrictions.

Despite the disruption, however, Guo and her team are being kept plenty busy, she said. Results of the current study are expected to be available sometime in 2022.

By focusing on improving the ways robots move, act, and react, Guo hopes to instill the level of trust needed for humans to accept robots as a normal part of life. Doing so, she said, could expand what humans are capable of accomplishing. But first, people need to become accustomed to robots and how they can help.

"People always ask, 'Is it safe?' Because people don't see many robots. To us [who work with robots], the robots are very safe," Guo said. "So I think it's a gradual acceptance by society. Our robots assist humans. They augment humans' ability. So I think if the general public sees more news about that, it will help them accept robots more."