These Are the Drones You're Looking For: Improved Design, Detection, Navigation, Cyberdefense

Multiple Stevens teams lead efforts to improve, defend and detect airborne and underwater drones

Drones are filling the skies.

Consumer giant Amazon will begin aerial drone delivery of small packages in two U.S. communities by early 2023. Five million consumer drones are shipped and sold worldwide annually, with more than 1 million already formally registered in the U.S. alone, according to the Federal Aviation Administration.

Airborne and underwater drones are used for everything from video photography and the sampling of toxic volcanic gases to underwater structure inspection, remote search and rescue operations, and surveillance of disaster areas during and after wildfires, floods and tornadoes.

But along with the rapid rise of these unmanned robots come new security and privacy concerns.

“They can save lots of money and time,” says Stevens professor Hamid Jafarnejad Sani, a drone security expert. “But they can be hacked. The GPS can be spoofed. They are both excellent candidates for innovative uses and natural targets for attack.”

That’s why Jafarnejad Sani and other Stevens researchers are working on ways to improve drones’ capabilities, defend them from hacks and even detect drones with potentially malicious intent.

Cybersecurity on the fly; drones with ‘arms’

Some Stevens teams are working on defending drones, both physically and digitally.

Jafarnejad Sani, director of the Safe Autonomous Systems Lab (SAS Lab), joined Stevens in 2019 after working on safety solutions for unmanned aerial vehicles (UAVs), including a system that can intelligently help guide drones to emergency landings after their engines have failed.

“Drones have rapidly become a growing force in modern engineering, delivery services, and homeland security,” he says. “And that means physical attacks on working drones, as well as strategic remote cyberattacks on them, will become more commonplace.”

Focusing on cybersecurity, Jafarnejad Sani and his team work to program situational intelligence and awareness into networked formations and systems of drones. His lab includes an indoor flying range, a Vicon motion-capture camera system and computation stations for the design, testing and verification of both aerial and ground robots.

Using several quadrotors in the SAS Lab, Jafarnejad Sani and Stevens doctoral candidate Mohammad Bahrami have recently conducted proxy experiments for the detection of stealthy adversaries for networked unmanned aerial vehicles.

In April 2022 Jafarnejad Sani received National Science Foundation support to further his work on attack-resilient vision-guided drones, in collaboration with a team at the University of Nevada, Reno.

“We will use control theory and machine-learning tools to identify and defend against cyberattacks, emphasizing high-dimensional visual data,” he explains. The work will include the development of novel detection, isolation and recovery algorithms to help UAV fleets defend against and recover from hacks and attacks.

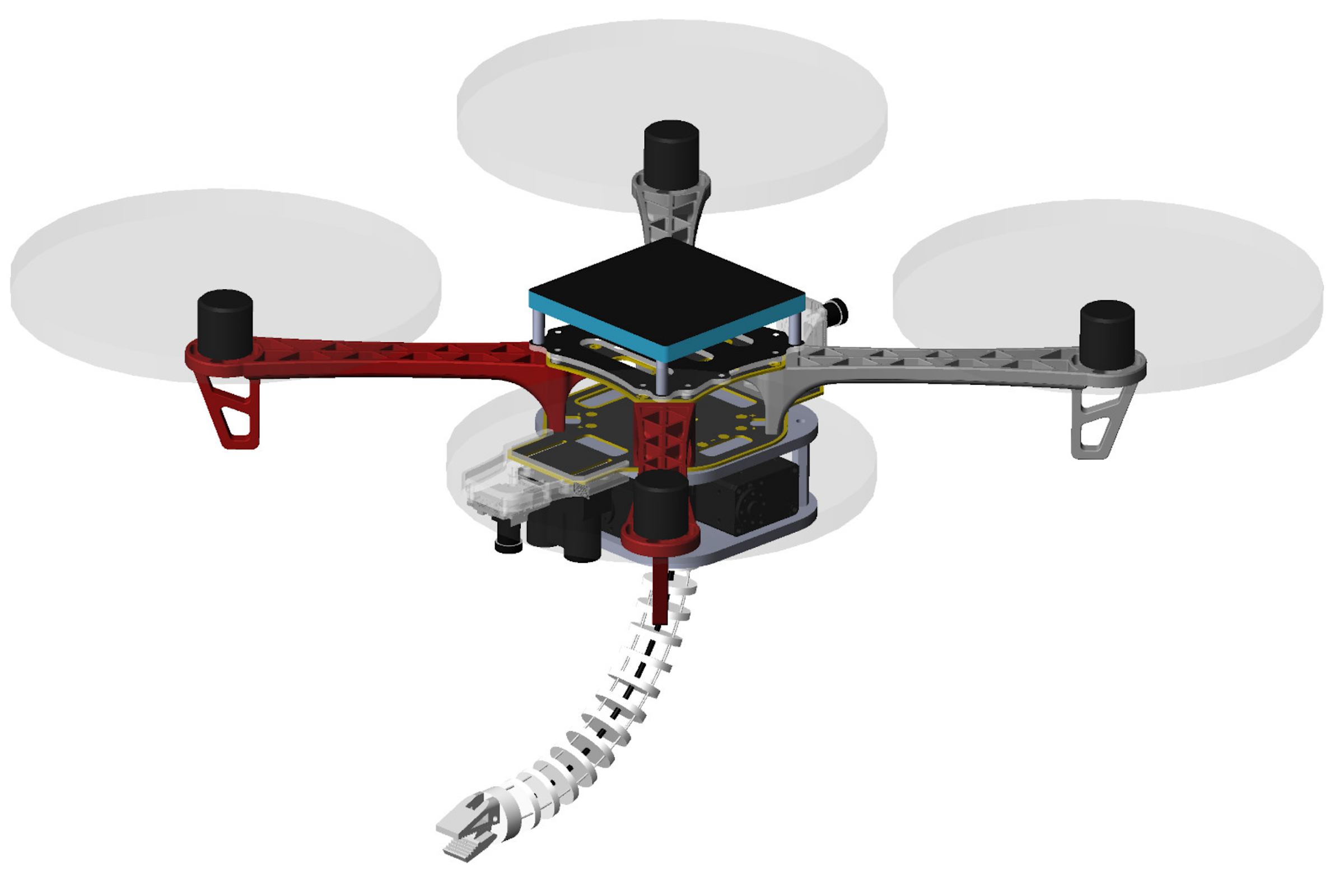

Jafarnejad Sani is also interested in extending drones’ capabilities. In a separate research effort, he collaborates with Stevens mechanical engineering professor and robotics expert Long Wang to design and test bio-inspired ‘arms’ that attach to flying drones.

“Drones will one day be able to lift, grip, toss and do many other things that a human hand and arm can do,” explains Wang.

Working with Stevens doctoral candidates Qianwen Zhao and Guoqing Zhang, the two researchers recently built and demonstrated low-cost, lightweight manipulator arms made with 3D-printed polymer components and nickel-titanium alloy cables. Key innovations included a bio-inspired, compliant arm design that can soften the contact impact for manipulation tasks.

In preliminary tests in an indoor lab environment simulating some real-world conditions, the manipulator arm showed promising capability in strength and stiffness.

“We will continue to work on this technology,” says Wang.

Underwater drones that find their own way

Stevens researcher Brendan Englot is a leading innovator in the algorithmic intelligence that helps unmanned machines navigate. He’s working on several projects to help them do it better.

“Unmanned aerial and remotely operated vehicles can physically go places humans can’t, and they can do things we can’t — or don’t want to — do in person,” he says. “And they are getting increasingly good at learning to find their way through unknown environments.”

Englot’s team has developed an underwater robot capable of simultaneously mapping its environment, tracking its own location and planning safe routes through complex and dangerous marine environments in real time, using its own predictions about uncertainty to make progressively better decisions.

His group’s AI-powered BlueROV2 autonomous robot explorer is the first deployed in a real-world marine environment that can learn on the fly, based on areas it has just explored and landmarks it has just encountered.

"This is a big step forward," notes Englot. "Underwater mapping in an obstacle-filled environment is a very hard problem, because you don't have the same situational awareness as with a flying or ground-based robot."

In tests, the robot utilized a custom-coded "active SLAM" (simultaneous localization and mapping) algorithm to thread its way through a crowded marina and harbor on New York's Long Island.

The robot leveraged sonar data to detect objects in its path and surroundings, mapping and learning as it traveled by forecasting uncertainty in new regions and identifying landmarks as it moved.

The result: safe, intelligent underwater routes that minimized danger while still acquiring useful information about the area around the robot.
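The trade-off described above, gathering new information while limiting risk and uncertainty, can be illustrated with a toy route selector. This is only a sketch under stated assumptions, not the Stevens team's active SLAM algorithm: the `Candidate` fields, the `choose_path` function, the weighting and the risk cutoff are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One candidate route (all fields are hypothetical illustration values)."""
    name: str
    expected_new_area_m2: float        # proxy for information gained
    predicted_pose_uncertainty: float  # proxy for forecast localization error
    collision_risk: float              # estimated probability, 0..1


def choose_path(candidates, uncertainty_weight=1.0, max_risk=0.2):
    """Pick the safe candidate with the best gain-minus-uncertainty score.

    Routes whose collision risk exceeds max_risk are discarded first.
    Returns None when no candidate is safe enough.
    """
    safe = [c for c in candidates if c.collision_risk <= max_risk]
    if not safe:
        return None
    return max(
        safe,
        key=lambda c: c.expected_new_area_m2
        - uncertainty_weight * c.predicted_pose_uncertainty,
    )
```

Raising `uncertainty_weight` in this sketch makes the selector more conservative, favoring routes that pass known landmarks over routes that reveal more unexplored area.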

"The robot knows what it doesn’t know, which lets it make smarter decisions," summarizes Englot.

Next the team will work to develop longer-lasting undersea missions, upgrading the system's onboard sonar capabilities and algorithms to enable mapping of increasingly complex environments.

Identifying drones by listening intelligently

Another research team works on the challenge of rapidly identifying unknown drones by their sound signatures.

A decade ago, Stevens researchers developed a patented system known as SPADES to detect unseen human divers by their breathing sounds. The system works by first creating a catalogue of human breathing sounds, then algorithmically matching ambient sounds against that library in real time as arrays of microphones listen undersea.
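The catalogue-then-match idea can be sketched in a few lines. The example below is an illustration only and does not reflect the patented SPADES method: it assumes each sound has already been reduced to a short feature vector (for instance, energy in a few frequency bands), and the function names, labels and threshold are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def best_match(features, library, threshold=0.9):
    """Compare one observed feature vector against a labeled library.

    Returns (label, score) for the closest entry, or (None, score)
    when even the closest entry scores below the threshold.
    """
    if not library:
        return None, 0.0
    label, score = max(
        ((name, cosine_similarity(features, ref)) for name, ref in library.items()),
        key=lambda pair: pair[1],
    )
    return (label, score) if score >= threshold else (None, score)
```

In this sketch, the threshold is what keeps ordinary ambient noise from being reported as a match when nothing in the library resembles it.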

Later the team, under the leadership of STAR Center and Maritime Security Center director Hady Salloum, expanded the SPADES methodology to listen for and identify everything from unknown planes or helicopters to furtive fishing boats and invasive insects hidden within cargo shipments and forest trees.

The patented system has even been licensed to industry partners, with additional interest from new partners (licenses are currently being negotiated).

“This technology has extremely wide applicability,” notes Salloum.

Now the Stevens group has turned its attention to unmanned aircraft systems (UAS), developing what it calls a Drone Acoustic Detection System (DADS). The system integrates a network of multiple-microphone arrays capable of detecting and tracking drones, using specialized SRP-PHAT (steered-response power phase transform) acoustic algorithms to process the acquired acoustic data.
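SRP-PHAT methods are commonly built on a pairwise building block called GCC-PHAT (generalized cross-correlation with phase transform), which estimates how much later a sound reaches one microphone than another. The sketch below shows that building block only; it is not the DADS implementation. The function names are hypothetical, and a naive discrete Fourier transform is used to keep the example dependency-free, where a practical system would use a fast FFT library.

```python
import cmath


def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform, adequate for short frames."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        s = sum(x[t] * cmath.exp(sign * 2j * cmath.pi * k * t / n) for t in range(n))
        out.append(s / n if inverse else s)
    return out


def gcc_phat_delay(sig, ref):
    """Estimate by how many samples `sig` lags `ref` (negative if it leads)."""
    n = len(sig) + len(ref)  # zero-pad so the correlation does not wrap around
    a = dft(list(sig) + [0.0] * (n - len(sig)))
    b = dft(list(ref) + [0.0] * (n - len(ref)))
    cross = [x * y.conjugate() for x, y in zip(a, b)]
    # Phase transform: discard magnitude and keep only phase in each bin.
    whitened = [c / abs(c) if abs(c) > 1e-12 else 0j for c in cross]
    cc = [v.real for v in dft(whitened, inverse=True)]
    peak = max(range(n), key=lambda i: cc[i])
    # Indices in the upper half of the frame represent negative lags.
    return peak if peak < n // 2 else peak - n
```

Given the microphone spacing, the sample rate and the speed of sound, a delay like this maps to a bearing toward the source. A steered-response approach evaluates many candidate directions and sums the whitened correlations across all microphone pairs, picking the direction with the most combined power.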

A few members of the STAR Center team, which includes researchers Daniel Kadyrov, Alexander Sedunov, Nikolay Sedunov, Alexander Sutin and Sergey Tsyuryupa, presented the work in June at the annual meeting of the Acoustical Society of America.

“A network of the Stevens DADS sensors allows UAS detection, tracking, and classification that other acoustic systems do not provide,” the group wrote in a paper accompanying its June conference presentation.

“Previous tests demonstrated an ability of a DADS network with three arrays to provide detection and tracking of small [drones] at distances up to 300 meters and harmonics… at distances up to 650 meters.”

More recently, the research group has worked on improving DADS by building stronger, larger microphone arrays. The original four-microphone, tetrahedral listening system has been expanded to seven microphones and reconfigured in a novel arrangement.

“New arrays could potentially enable detection at even greater distances from operating or incoming drones,” explains Salloum. “We are always working to refine and optimize the technology.”

Indeed, during two recent tests at a small private airfield in northern New Jersey, the new microphone array outperformed the original system as four different commercial drones zoomed, zigged, zagged, hovered and landed near the two listening arrays.

“The seven-microphone array outperformed the original setup in each case,” says Salloum, adding that Stevens’ researchers are now designing and testing an even larger ten-microphone listening array.

The team’s work has also enabled development of a new algorithm that can accurately predict a sensor’s detection range at different noise levels and in different environmental conditions.
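To see why detection range depends so strongly on noise, consider a simplified model. The sketch below is not the team's algorithm: it assumes sound spreads spherically from a source level referenced to 1 meter, with optional linear absorption, and declares a detection when the received level exceeds the noise level by a chosen threshold. All function names, parameters and decibel values are hypothetical.

```python
import math


def received_level_db(source_level_db, range_m, absorption_db_per_m=0.0):
    """Level at range_m under spherical spreading plus linear absorption."""
    return source_level_db - 20.0 * math.log10(range_m) - absorption_db_per_m * range_m


def detection_range_m(source_level_db, noise_level_db, threshold_db,
                      absorption_db_per_m=0.0, max_range_m=10_000.0):
    """Largest range (1 m to max_range_m) where the signal still clears the threshold."""

    def excess(r):
        level = received_level_db(source_level_db, r, absorption_db_per_m)
        return level - noise_level_db - threshold_db

    if excess(1.0) < 0:
        return 0.0          # not detectable even at the reference distance
    if excess(max_range_m) >= 0:
        return max_range_m  # detectable across the whole search interval
    lo, hi = 1.0, max_range_m
    for _ in range(60):     # bisection; excess() decreases as range grows
        mid = 0.5 * (lo + hi)
        if excess(mid) >= 0:
            lo = mid
        else:
            hi = mid
    return lo
```

Under these assumptions, every 6 dB of added background noise roughly halves the predicted range, which is one reason a quiet test site and a noisy urban site can produce very different results for the same array.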

“This work is important for homeland security,” adds Salloum. “With drones now possessing intelligence and advanced capabilities, acoustic detection becomes a useful technological tool in the nation’s defense posture.”