Stevens Team Uses Virtual Reality to Help Control Prosthetic Limbs and Restore Post-Amputation Sense of Motion

Biomedical engineering professor Raviraj Nataraj works with prestigious Cleveland Clinic team to publish pathbreaking advance

Amputation patients face long, difficult rehabilitation periods.

The key challenge for these patients is regaining the ability to perform daily activities after limb loss. Research focuses on restoring patients' sensation of movement (known as kinesthesia) and on improving control in the region of a missing limb by helping patients re-learn how to finely control the muscles that could drive prosthetic limbs and devices such as robotic hands.

Now a new collaboration between Stevens Institute of Technology and the world-renowned Cleveland Clinic offers hope for a quicker, more effective rehabilitation process.

The research, highlighted in a recent issue of the leading medical journal Science Translational Medicine, involves a relatively new type of intervention for upper-body amputation patients known as targeted muscle re-innervation. In this groundbreaking procedure, surgeons remove nerves from the vicinity of an amputated limb and implant them nearby in the chest.

By touching that region of the chest in specific ways, doctors and researchers found that patients can be provided the illusion of touch and movement sensation in the region of the absent limb. A certain type of touch at the chest, for instance, can give the illusion a missing hand is actually opening, closing, or even gripping an object. These patients can also learn thoughts and muscle motions that more naturally operate their prosthetics.

But the researchers knew that, in order to maximize the novel procedure's effectiveness, they would need a regimen of new exercises that efficiently teach control and motion.

That's where biomedical engineering professor Raviraj "Ravi" Nataraj came in. Nataraj, a graduate of Case Western Reserve University who joined Stevens in 2016, began assisting on the project while a postdoctoral research associate at the Cleveland Clinic.

"They were initially taking more of a neuroscience approach in evaluating the system," he recalls, "and I was brought in because they needed additional engineering expertise."

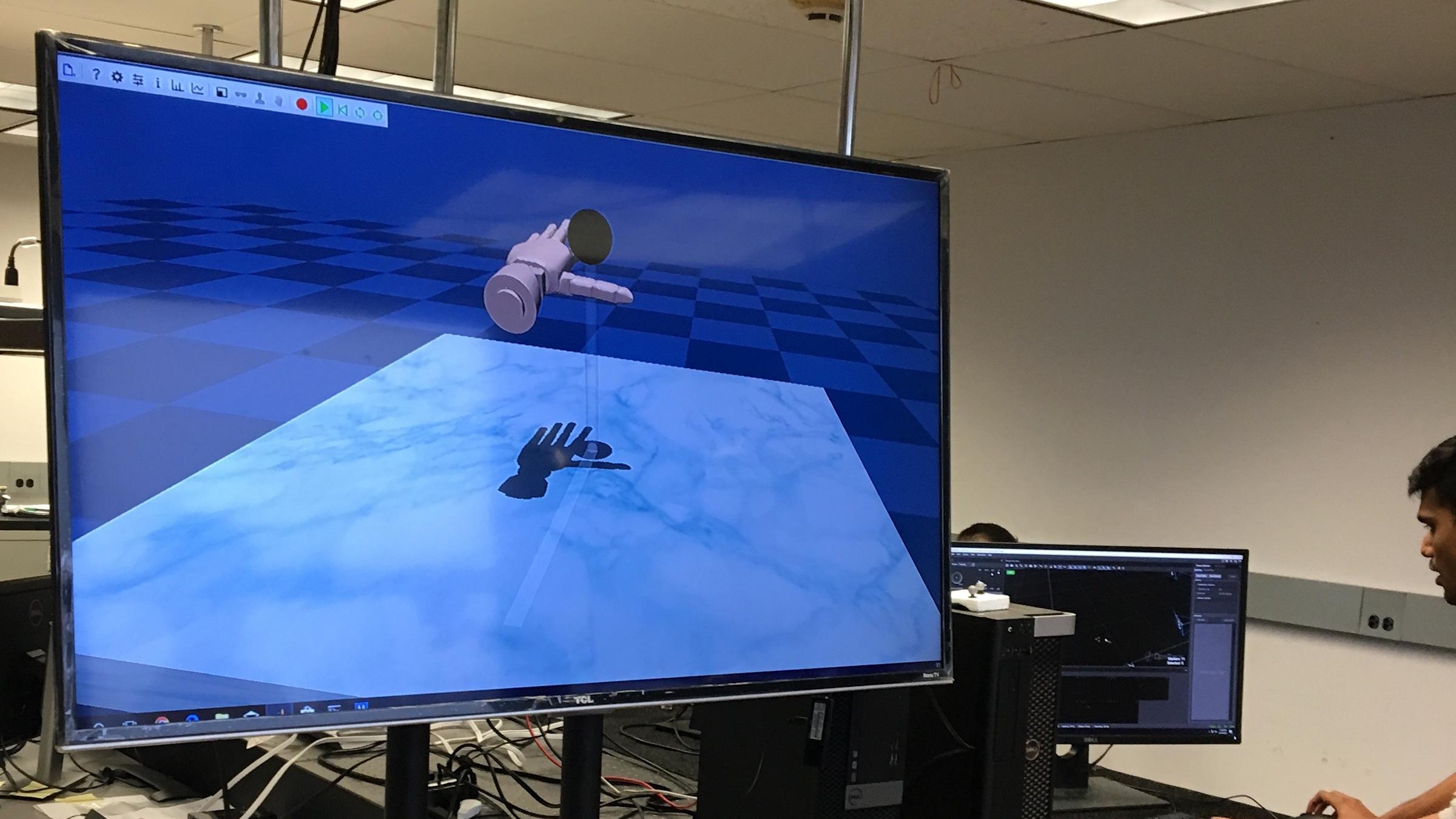

Programming in MATLAB and the MuJoCo HAPTIX physics engine (a specialized simulation environment developed under a DARPA initiative, with a particular strength in capturing contact interactions between objects), Nataraj began developing a virtual reality (VR) platform to help patients undergoing the new experimental surgery retrain muscles and nerves to control prosthetics more naturally.

He did it by utilizing a 'virtual hand' modeled after a real prosthetic hand, then programming various challenging tasks where an amputation patient attempts to control the hand.

"I help develop better feedback control methods for the devices, so that users can better control them and feel more connected to them," he says.

The battery of exercises Nataraj designed includes a series of fine motor-skill tasks for the virtual hand, involving the grasp of various virtual objects (spheres, blocks and cylinders) and completing manipulation tasks as quickly and effectively as possible.

"Down the line, we hope to achieve more complex tasks, such as catching a ball. That would be true cognitive integration, across multiple degrees of freedom," he notes.

Refining the VR, gaming the system

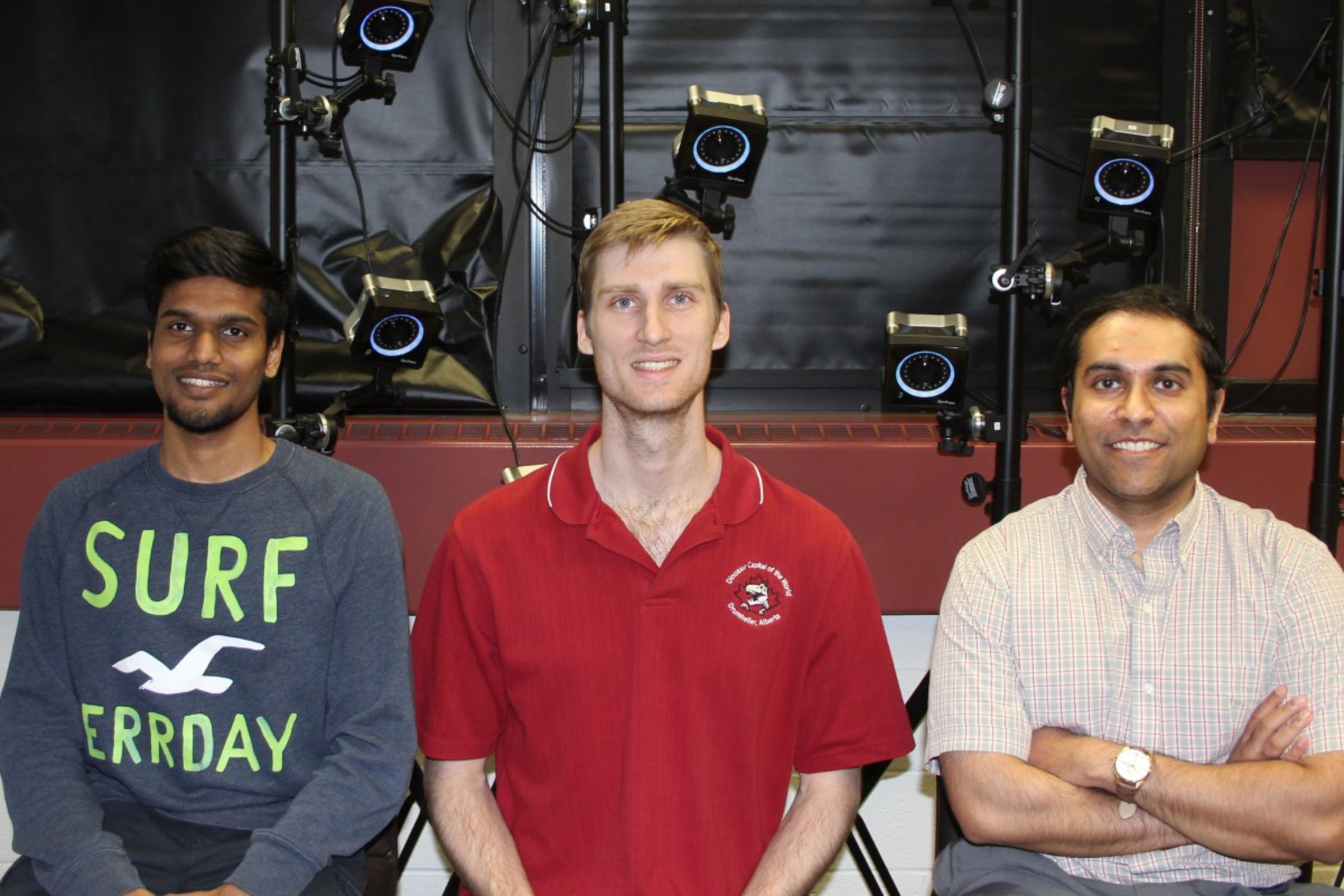

In his Movement Control Rehabilitation (MoCoRe) Lab on the Stevens campus, Nataraj and his team of graduate students continue to develop new VR tasks for testing with various clinical populations.

"We try to enable better cognitive integration during movement rehabilitation," he explains. "We know we can restore your illusion of motion. So, can we now use that illusion and project it onto a prosthesis toward a functional end? Can we vary device operation to augment cognitive agency — the feeling you are the true author of your own movements?"

In one setup, reflective markers on a glove tracked by infrared cameras power the virtual prosthetic hand as subjects attempt to touch a ball on a screen. A sound alerts them to success — but the team can speed up or slow down the hand's motions to make the task more difficult and train subjects to exert finer control over time. Interestingly, systematically introducing color changes and a light vibration of the virtual hand can actually enhance learning of the task.
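The speed-up and slow-down manipulation described above can be pictured as a gain applied to the tracked motion. The following is only a minimal sketch under stated assumptions, not the lab's actual software: the function name `scale_hand_motion`, the `gain` parameter, and the representation of tracked positions as 3D tuples are all hypothetical and chosen for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def scale_hand_motion(tracked: List[Point], gain: float) -> List[Point]:
    """Replay tracked glove positions with each frame-to-frame step scaled by `gain`.

    Hypothetical helper: gain < 1 makes the virtual hand move slower than the
    real hand, gain > 1 makes it move faster, and gain == 1 reproduces the
    tracked path unchanged.
    """
    if not tracked:
        return []
    virtual = [tracked[0]]  # the virtual hand starts where the real hand starts
    for prev, curr in zip(tracked, tracked[1:]):
        last = virtual[-1]
        # Scale the real hand's displacement for this frame, then apply it
        # to the virtual hand's previous position.
        step = tuple(gain * (c - p) for c, p in zip(curr, prev))
        virtual.append(tuple(l + s for l, s in zip(last, step)))
    return virtual


# Illustrative data only: a hand moving 1 unit per frame along the x axis.
tracked_path = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
slowed_path = scale_hand_motion(tracked_path, gain=0.5)
```

With a gain of 0.5, the virtual hand in this sketch covers half the distance of the real hand over the same frames, so the subject would have to move farther or more deliberately to reach the target.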

In another experimental setup, doctoral candidate Sean Sanford is developing a task in which subjects lightly pinch two bars to train small-force movements in prosthetic wrists and fingers. He is also developing a visual-learning experiment involving squat-type exercises for lower-body rehabilitation, with implications for sports and for helping those with mobility disorders.

The timing and quantity of feedback given to patients each appear to make a significant difference in the learning process.

"If you give all the feedback in real time, as the task is being done, there is some benefit," explains Sanford. "But if you withhold some of the feedback until the attempt is over, and then give it all — in the form of a visual graph charting how you just did, in this case — immediately after completing each trial, patients learn to control their motions on their own faster."

"These findings have implications for designing better platforms for rehabilitation training," adds Nataraj.

Doctoral student Aniket Shah focuses on robotics engineering, particularly prosthetic controls. He's working with the VR system and also creating a prototype tactile 'learning glove' that will give enhanced feedback when a successful motion or grasp has been performed by, for example, changing color brightly or suddenly as the task is achieved.

And master's student David Hollinger works on programming "reward" into the VR system, depending on individual patients' characteristics and personalities. He also hopes to help extend the platform to stroke patients in the near future.

"Some patients believe they can control their health, while others are more oriented toward believing external factors control their health," explains Hollinger. "I work to tailor the VR experience for [...] appear on screen could trigger a pleasant memory in a particular subject who once played the sport, producing potentially better rehab outcomes.

Next steps: clinical tests at Bronx VA center

This summer, Nataraj's team will begin testing the improved VR platform on new patients, working with pilot clinical subjects at the James J. Peters VA Medical Center in the Bronx.

"We are fortunate to have begun building a relationship with this VA medical center," says Nataraj. "It will be exciting."

The team will also soon apply for funding to adapt and extend the VR platform to such applications as lower-limb exoskeleton control and rehabilitation from paralyzing spinal cord injuries.

"We have motion work and force work happening," Nataraj sums up. "And our ultimate goal is to put this all together cohesively to better train individuals on complex yet typical tasks of daily living. That's where we're heading."