Long Wang Awarded $413K in SBIR Grants from U.S. Department of Defense to Develop Biomechanically Accurate Digital Human Model for Use in Tactile Human-Robot Interaction Simulations

Equipped with advanced biometrics, the model could help develop autonomous robot capabilities for locating and extracting wounded personnel in military settings, as well as for emergency response and healthcare

Despite the elaborate animations in CSI-style popular entertainment, the digital human models actually used to simulate human-robot interactions are often quite crude.

"You'd be shocked how simple some human models are that are available in off-the-shelf simulators," said Stevens Institute of Technology mechanical engineering assistant professor and Advanced Robot Manipulators Lab principal investigator Long Wang. "For example, they don't have skin. Everything is hard, like a steel man."

Such oversimplified models cannot gauge or accurately report how a real human body responds to robotic manipulation, for example when an autonomous robot grasps an unconscious person and drags them out of a dangerous situation.

The U.S. Department of Defense (DoD) Defense Health Agency has awarded Wang, in partnership with Corvid Technologies, $412,993 in grants to develop a biomechanically accurate digital human model with advanced biometrics capabilities for use in simulations of autonomous robots that locate and extract wounded soldiers from high-risk environments.

Granted through the DoD's Small Business Innovation Research program, the competitive awards cover the first two of up to three phases of federal funding for projects in which small businesses partner with nonprofit research institutions to develop innovative, commercially promising solutions to current U.S. needs.

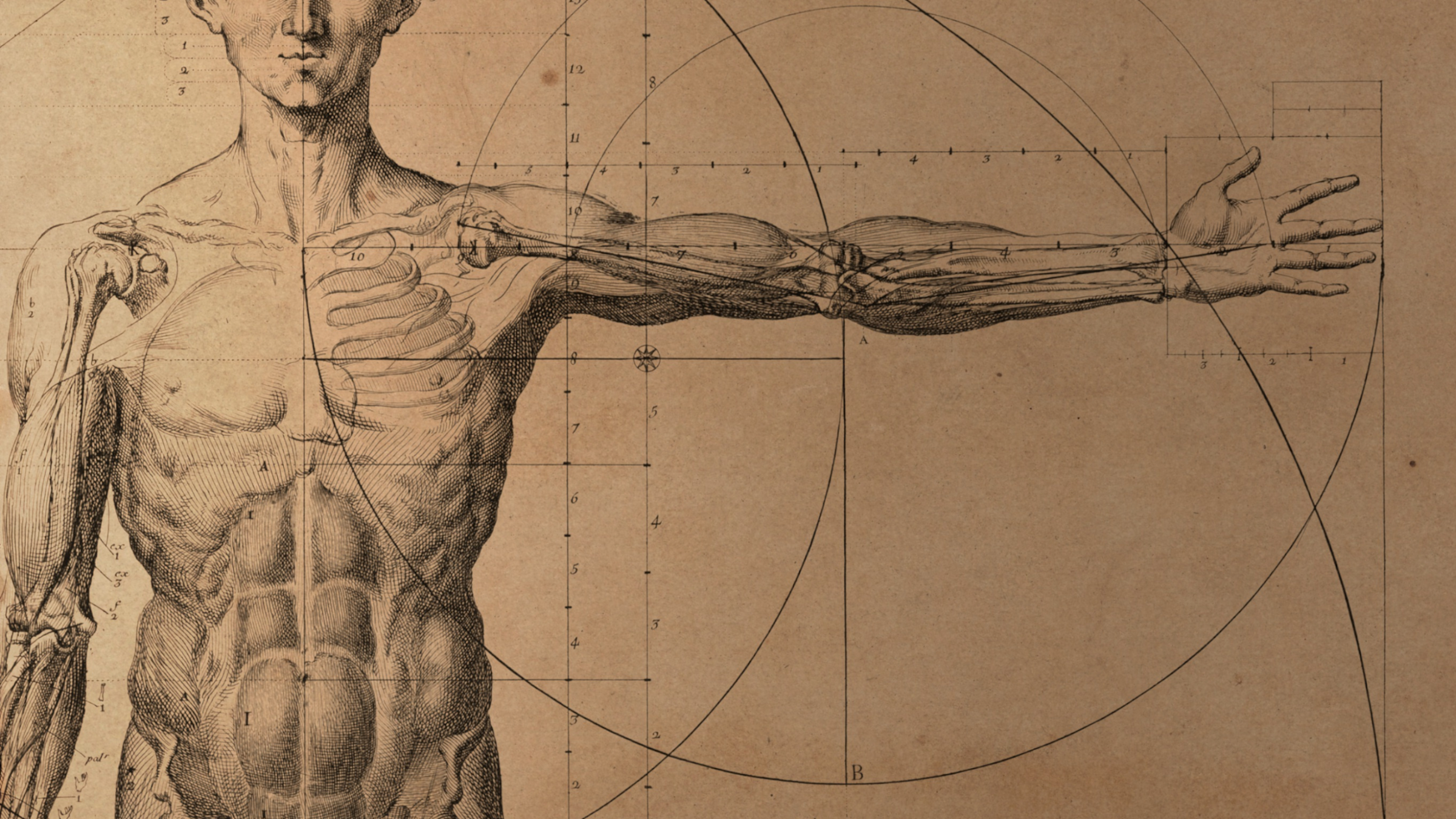

Titled "Digital Human Model for Use in Simulation Environments for Tactile Human/Robot Interaction," the project addresses a critical shortcoming in current simulation technology: the resulting model will measure the stress and strain produced when a human interacts with robotic manipulators, including contact forces, joint torques and distributed loads when a person is grasped, lifted, dragged or repositioned.

By providing accurate musculoskeletal anatomy along with force-response and stress metrics, this advanced human model will ultimately make it possible to design and develop robotic manipulators that can physically interact with humans safely and effectively.

To build a better robot, build a better human (model)

As advanced as robotic technology has become, Wang suggested, it is still limited by our understanding of how robots affect human anatomy. Building a better tactile robot requires a better human model with which to design, develop and test it.

And doing so requires returning to first principles, such as the fact that human bodies are not made of metal, as those off-the-shelf simulators might suggest.

"By a biomechanically accurate model, we mean that we know humans are made of bones inside and have muscles between the skin and bones," Wang explained. Unlike steel, each layer of biological tissue moves and responds differently when grasped or manipulated, and each can be cut, bruised, fractured or broken, or even detached entirely. The movements and degrees of freedom of the body's limbs and joints are also limited, so a realistic model must quantify those anatomical limits.

Some more advanced models do represent human musculoskeletal structure, but they cannot measure how external forces act on the body. Developing technologies that are not only innovative but also characterize and prioritize human safety is therefore of the utmost importance.

"In this project, in terms of safety, we primarily care about two things," said Wang. "One, when the robot hand grasps a human arm, we want to make sure that we're not squeezing too hard on the skin or applying force and pressure on the human arms so that it does permanent damage. The second part is joint torque — that when the robot moves, we want to make sure that it's not moving out of range too far for a human."

The model will represent accurate human anatomy, density and mass, and will provide real-time metrics on how a seven-degree-of-freedom robotic arm and gripper (a hand-like device at the end of the arm) affect the digital human's skin, bones, muscles and key joints during tactile interaction. This flesh-and-bone digital model will measure and report the reaction forces generated when each joint or limb is grasped and moved into a new position, and it will also capture accurate musculoskeletal dynamics while the manipulation is taking place.

Ultimately, the robot model that interacts with this human model will need to grasp, lift, reposition and drag a person. By accounting for the realities of human anatomy and the body's reactions, the human model will help quantify the safety of a particular robotic movement or technique: for example, by revealing that a robot's grip was too tight, its lift too quick or forceful, or its rotation of a limb beyond the joint's natural range, any of which could cause permanent or even fatal injury.

The human model will ultimately be integrated with common open-source simulation environments for robotic motion planning, and its results will be exportable so that they may be used to refine motion planning algorithms.

In addition to reducing risk and increasing the capacity of medical personnel in high-risk military situations, the resulting human modeling technology holds potential for use in simulations for search-and-rescue and emergency response, industrial robotics and health hazard assessment tools for injury prevention.

Wang also sees potential for the technology to shore up labor-intensive but often under-resourced healthcare industries, such as nursing home care.

“For example, if a robot could help safely extract a soldier onto a stretcher, then it should know how to safely help an older person get up from their bed, transfer them into a wheelchair, or assist with personal hygiene or other daily activities,” he said.

Succeeding in phases

The DoD-sponsored competition is being conducted in three phases, and researchers' success in each phase determines whether they advance to the next. Phase I began in March 2022.

For the initial six-month phase, Wang and Corvid were selected and awarded $150,000 in total ($52,000 to Wang) to conduct a feasibility study and design a proof-of-concept model that simulates and measures preliminary stress and reaction-force metrics. The prototype and final report were submitted in September 2022, and findings from Phase I are being prepared for publication.

Recently selected by the U.S. Army's Telemedicine and Advanced Technology Research Center to advance to Phase II, Wang and Corvid will use the data and advances from Phase I to inform the design and development of a computational software framework that supports the safe planning and control of robotic extraction of injured people. The framework will include a biomechanically accurate human model, a robot model and tools for computing the physical interactions between robot and human in real time.

Phase II began in March 2023 and will run for two years. Funding for this phase totals $1 million, with $360,993 awarded to Wang. Across Phases I and II, Wang and Corvid have so far been awarded a combined $1.15 million.

Should their project be selected to advance to Phase III, the team will pursue commercialization objectives, using government feedback from Phase II to develop the framework for a wider variety of potential clients, uses and industries.

Addressing a critical shortcoming

The partnership between Stevens and Corvid, Wang said, builds strength upon strength: each team takes on the side of the human-robot interaction puzzle it knows best, and the two are unified in a single framework.

"Corvid has a high-fidelity human model, and we have had robotic simulation development going on at Stevens for some time," Wang said. "It's a perfect match."

But with so many advances made in robotic technology in the last 50 years, how could something as integral and obvious as the need for an accurate human model for simulating human-robot interactions have been neglected for so long?

"I don't have good answers to that because I was shocked, too," Wang said. "It's a great question. I don't understand why there isn't already an accurate human model."

While he can only speculate, Wang suggested two likely reasons for the shortcomings of current human models.

For one — and most simply — even though the interaction between humans and robots "is a trendy research topic," he explained, developing accurate digital human models based on the various parameters of a human body "is actually a lot of work."

The second reason, Wang suggested, is that until quite recently, robotic technology had not advanced to the point where close physical contact between humans and robots was a viable option.

"If you think about it, the need to interact with a human — for a robot and human coexisting in the same workspace — that's actually something new," Wang said. "In the old days, all of the robots had to be caged in a cage-like cube. No human was supposed to be in there because you didn't know what the robot might do or what would happen if maybe there was an error."

An example of such an error occurred in Moscow in July 2022, when a chess-playing robot fractured a seven-year-old's finger after the child moved his chess piece too quickly for the robot's programming.

Although the incident was relatively minor, it demonstrates the importance of giving robots an understanding of the basics and limits of human anatomy so they can act accordingly. In more extreme cases, or in high-risk or industrial settings, a lack of such knowledge could be fatal. Developing a human model that realistically moves, responds, sustains injury and even breaks like a real human body is the first step toward tactile robots safe enough for people to interact with in everyday life.

"Our first priority," Wang said, "is how do we make sure that the human that we're helping or we are physically interacting with is always within a safe margin."